One of the more polarizing topics in investing is the valuation of Tesla stock. Its peers among the Magnificent 7 big tech leaders sport price/earnings ratios mainly in the 30s. Those are high numbers, but growth stocks deserve high P/Es. A way to normalize for expected earnings growth is to look at the Price/Earnings-to-Growth (PEG) ratio, which divides the P/E by the expected annual earnings growth rate in percent. This number is usually 1.5-2.0 for a well-regarded company. Anything much over 2 is considered overvalued.

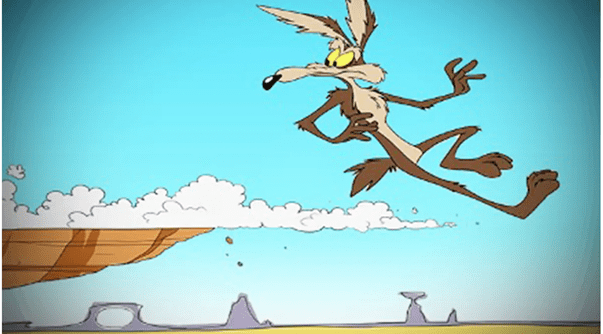

Tesla’s forward P/E of about 270 is nearly ten times higher than peers. Its anticipated growth rate does not seem to justify this astronomical valuation, since its PEG of around 4-10 (depending on assumptions) is way higher than normal. This seems to be a case of the CEO’s personal charisma dazzling shareholders. There is always a new “story” coming out to keep the momentum going.
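To make the PEG arithmetic concrete, here is a minimal sketch in Python. It uses the standard definition (P/E divided by expected annual earnings growth in percent) and the forward P/E of about 270 quoted above. The function names and the peer example (a P/E of 35 with 20% growth) are my own illustrative assumptions, not figures from any data source.

```python
def peg_ratio(pe: float, growth_pct: float) -> float:
    """PEG = (price/earnings) / expected annual earnings growth in percent."""
    if growth_pct <= 0:
        raise ValueError("PEG is only meaningful for positive expected growth")
    return pe / growth_pct


def implied_growth_pct(pe: float, peg: float) -> float:
    """Annual growth rate (in percent) that a given P/E and PEG jointly imply."""
    if peg <= 0:
        raise ValueError("PEG must be positive")
    return pe / peg


tesla_forward_pe = 270.0  # approximate figure quoted in the article

# Growth assumptions implied by the article's PEG range of 4 to 10.
for peg in (4.0, 10.0):
    growth = implied_growth_pct(tesla_forward_pe, peg)
    print(f"PEG {peg:>4}: implied growth {growth:.1f}% per year")

# Growth Tesla would need to bring its PEG down to 2.
needed = implied_growth_pct(tesla_forward_pe, 2.0)
print(f"Growth needed for PEG 2: {needed:.0f}% per year")

# Hypothetical peer (assumed numbers, for illustration only).
print(f"Peer PEG: {peg_ratio(35.0, 20.0):.2f}")
```

Read this way, a PEG of 4 to 10 at a P/E of 270 corresponds to growth assumptions of roughly 67% down to 27% a year, while getting to a PEG of 2 would take about 135% a year.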

Tesla’s main actual business is selling cars, electric cars. It has done a pretty good job at this over the past decade, supported by massive government subsidies. With the phasing out of these subsidies by the U.S. and some other governments, and increasing competition from other electric carmakers, it seems unlikely that this business will grow exponentially. Ditto for its smallish ($10 billion revenue) business line of supplying large batteries for electric power storage. But to Tesla fans, that doesn’t really matter. Tesla is valued, not as a car company, but as an AI startup venture. Just over the horizon are driverless robo-taxis (whose full deployment keeps getting pushed back), and humanoid Optimus robots. The total addressable market numbers being bandied about for the robots are in the trillions of dollars.

Source: Wikipedia

From Musk’s latest conference call:

Optimus is Tesla’s bipedal humanoid robot that’s in development but not yet commercially deployed. Musk has previously said the robots will be so sophisticated that they can serve as factory workers or babysitters….“Optimus will be an incredible surgeon,” Musk said on Wednesday. He said that with Optimus and self driving, “you can actually create a world where there is no poverty, where everyone has access to the finest medical care.”

Given the state of Artificial General Intelligence, I remain skeptical that such a robot will be deployed in large numbers within the next five years. It is of course a mind-bending exercise to imagine a world where $50,000 robots could do anything humans can do. Would that be a world where there is “no poverty”, or a world where there is no wealth (apart from the robot owners)? Would there be a populist groundswell to nationalize the robots in order to socialize the android bounty? But I digress.

On the Seeking Alpha website, one can find various bearish articles with self-explanatory titles such as “Tesla: The Dream Factory On Wall Street,” “Tesla: Rallying On Robotaxi Hopium,” and “Tesla: Paying Software Multiples For A Car Business – Strong Sell.” There are also bullish pieces on the same site.

Musk’s personal dealings in Tesla shares have also propped up their value. He purchased about $1 billion in TSLA shares in September. This is chicken feed relative to Tesla’s market cap and his net worth, but it apparently wowed TSLA fans, and popped the share price. What seems even more inexplicable is the favorable response to a proposed $1 trillion (!!) pay package for Elon. For him to be awarded this amount, Tesla under his watch would have to achieve hefty boosts both in physical production and in stock market capitalization. But… said package would be highly dilutive (roughly 12%) to existing shareholders, so rationally they should give it a thumbs down. However, it seems likely that said shareholders are so convinced of Musk’s value that they will approve this pay package on Nov 6, since he has hinted he might leave if he doesn’t get it.
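For a rough sense of what that dilution means, here is a small Python sketch. It rests on one loud assumption: that “12% dilutive” means new shares equal to about 12% of the shares currently outstanding would be issued to Musk. The actual terms of the package may be structured differently, and the function names and the 1% example holder are hypothetical.

```python
def stake_after_dilution(stake: float, new_shares_fraction: float) -> float:
    """Ownership fraction of an existing holder after new shares go to someone else.

    new_shares_fraction is new shares divided by shares currently outstanding
    (assumed here to be 0.12 for the proposed package).
    """
    return stake / (1.0 + new_shares_fraction)


def break_even_value_growth(new_shares_fraction: float) -> float:
    """Fractional rise in total company value needed so that an existing
    holder's position is worth the same after dilution as before it."""
    return new_shares_fraction


dilution = 0.12  # ASSUMPTION: interpretation of the article's "like 12%"

before = 0.01  # hypothetical holder owning 1% of the company today
after = stake_after_dilution(before, dilution)
print(f"Stake before: {before:.4%}, after: {after:.4%}")
print(f"Company value must rise {break_even_value_growth(dilution):.0%} just to break even")
```

Under that assumption, existing holders keep about 89% of their former ownership share, so the company’s value has to rise by around 12% just to leave them where they started.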

Such is the Musk mystique that shareholders seem to feel that giving him an even greater stake in Tesla than he already has will cause hundreds of billions of dollars of earnings to appear from thin air. From the chatter I read from Wall Street professionals, they view all this as ridiculous magical thinking, yet they do not dare place bets against the Musk fanbase: the short interest in TSLA stock is only a modest 2.2%. Tesla is grossly overvalued, but it will likely remain that way as long as Elon remains and keeps spinning grand visions of the future.