The basic idea is that we want to compare the performance of different portfolios or their managers. This is relatively easy as long as the portfolios contain the same assets. Then, the portfolios are simply characterized by the different weights among the different assets. But how do we compare the performance of portfolios whose assets are different? In finance, we usually assume that everyone can invest in everything. But there are plenty of cases in which that’s a bad assumption: when clients want exposure to particular industries, when there are statutory limitations on holding certain assets, or when an individual company is considering specific projects within the same company under conditions of scarce financing.

The most primitive step is to compare the return and standard deviation of two different portfolios. However, higher-risk investments tend to have higher returns in dynamic equilibrium. So, if we were to compare the returns of tech companies to those of utility companies, we’d often see the tech companies performing better. But if we compare the volatilities, the utility companies would tend to perform better. Sharpe stepped in with a ratio that expresses the excess return (the benefit) per unit of standard deviation (the cost). This way, we can compare the price of volatility across two portfolios. We’ll stick with just these three basic measures: return, standard deviation, and Sharpe ratio. (Others do exist.)
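As a minimal sketch of these three measures, the snippet below computes them from a series of daily returns. The 252-day annualization, the arithmetic (non-compounded) annual return, the sample standard deviation, and the function name `performance_measures` are all assumptions made for illustration, not something the discussion above prescribes. The input returns are hypothetical.

```python
import math
import statistics

TRADING_DAYS = 252  # assumption: daily data, annualized over 252 trading days


def performance_measures(daily_returns, risk_free_rate=0.0):
    """Return (annual_return, annual_std, sharpe) for a list of daily returns."""
    # Arithmetic annualization of the mean daily return (an assumption).
    annual_return = statistics.mean(daily_returns) * TRADING_DAYS
    # Sample standard deviation, scaled by the square root of time.
    annual_std = statistics.stdev(daily_returns) * math.sqrt(TRADING_DAYS)
    # Sharpe ratio: excess return per unit of standard deviation.
    sharpe = (annual_return - risk_free_rate) / annual_std
    return annual_return, annual_std, sharpe


# Hypothetical daily returns, for illustration only.
sample = [0.010, -0.005, 0.003, 0.007, -0.002]
annual_return, annual_std, sharpe = performance_measures(sample, risk_free_rate=0.04)
print(f"return={annual_return:.3f}, std={annual_std:.3f}, sharpe={sharpe:.3f}")
```

With a compounded annual return or a different day count, the numbers would shift, but the ratio keeps the same reading: return earned above the risk-free rate per unit of volatility.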

Let’s put some meat on this with an example. Say that we have two portfolios, each composed of different assets. There’s a utility portfolio that’s composed of NEE, DUK, and SO. There’s also a tech portfolio that’s composed of AMD, MSFT, and NVDA. Both portfolios have weights of (0.33, 0.33, 0.34). The results of the utility versus the tech portfolio are:

- Returns: 14.2% vs 136.3%

- Standard Deviation: 14.9% vs 32%

- Sharpe: 0.684 vs 4.134

Goodness me! The tech portfolio returns much more in absolute terms and much more per unit of risk. It’s about twice as volatile as the utility portfolio, but its return is almost ten times as high. Given a free choice, many of us would pick the tech portfolio over the utility portfolio. But what if, for one reason or another, you can only invest in one of the two industries? Or what if you want to invest your money with a skilled manager, rather than a merely risky one?
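The arithmetic behind these comparisons can be checked in a few lines. The risk-free rate is not stated above. The 4% used here is an assumption, chosen because it approximately reproduces both reported Sharpe ratios from the reported returns and standard deviations.

```python
def sharpe_ratio(annual_return, annual_std, risk_free_rate):
    """Excess return per unit of standard deviation."""
    return (annual_return - risk_free_rate) / annual_std


# Assumption: inferred from the reported figures, not stated in the text.
RISK_FREE = 0.04

# Reported results for the two portfolios.
utility = {"ret": 0.142, "std": 0.149}
tech = {"ret": 1.363, "std": 0.32}

utility_sharpe = sharpe_ratio(utility["ret"], utility["std"], RISK_FREE)
tech_sharpe = sharpe_ratio(tech["ret"], tech["std"], RISK_FREE)

# How many times more volatile, and how many times higher the return.
vol_multiple = tech["std"] / utility["std"]
return_multiple = tech["ret"] / utility["ret"]

print(f"Sharpe: {utility_sharpe:.3f} vs {tech_sharpe:.3f}")
print(f"Volatility multiple: {vol_multiple:.2f}, return multiple: {return_multiple:.2f}")
```

Small differences from the reported Sharpe ratios are to be expected, since the inputs here are already rounded.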

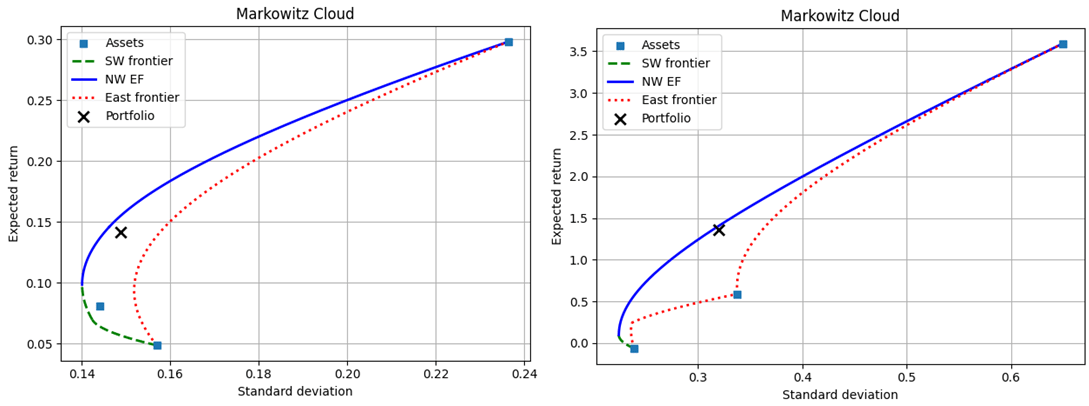

One way to tackle this problem is to introduce the Markowitz cloud. Specifically, we can list out all of the possible portfolios along with their returns and standard deviations. Then, we can compare the actual performance to the entire menu of possible performances within each set of assets. The accompanying figure shows the possible performances for the utility (left) versus the tech (right) portfolio. The actual portfolios are marked with an X.
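One way such a cloud could be generated is by sampling many random weight vectors and computing each portfolio's return and standard deviation. The sketch below assumes long-only weights that sum to one, so it approximates the menu by sampling rather than listing every portfolio. The expected returns and covariance matrix are hypothetical placeholders, not estimates for the tickers above, and the function names are illustrative.

```python
import math
import random


def portfolio_stats(weights, mean_returns, cov):
    """Return (expected_return, std) of a portfolio with the given weights."""
    n = len(weights)
    ret = sum(w * m for w, m in zip(weights, mean_returns))
    # Portfolio variance is the quadratic form w' * Cov * w.
    var = sum(weights[i] * cov[i][j] * weights[j]
              for i in range(n) for j in range(n))
    return ret, math.sqrt(var)


def random_weights(n_assets, rng):
    """Draw non-negative weights that sum to one (normalized exponential draws)."""
    draws = [rng.expovariate(1.0) for _ in range(n_assets)]
    total = sum(draws)
    return [d / total for d in draws]


def markowitz_cloud(mean_returns, cov, n_portfolios=5000, seed=0):
    """Sample random long-only portfolios as (weights, return, std) tuples."""
    rng = random.Random(seed)
    cloud = []
    for _ in range(n_portfolios):
        w = random_weights(len(mean_returns), rng)
        ret, std = portfolio_stats(w, mean_returns, cov)
        cloud.append((w, ret, std))
    return cloud


# Hypothetical inputs for three assets, for illustration only.
mean_returns = [0.10, 0.15, 0.20]
cov = [[0.04, 0.01, 0.00],
       [0.01, 0.09, 0.02],
       [0.00, 0.02, 0.16]]

cloud = markowitz_cloud(mean_returns, cov, n_portfolios=1000)
# The realized portfolio, using the weights from the example.
actual = portfolio_stats([0.33, 0.33, 0.34], mean_returns, cov)
print(f"{len(cloud)} candidates; actual return={actual[0]:.4f}, std={actual[1]:.4f}")
```

Plotting each candidate's standard deviation against its return, with the realized portfolio marked separately, gives a scatter of the kind described above.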

We can then evaluate the two portfolios by comparing their return, standard deviation, and Sharpe ratio to those of the other candidates that were achievable with the same assets. As we can see, conditional on the assets, neither portfolio minimized volatility, maximized return, or maximized the Sharpe ratio. Furthermore, if the realized rate of return was the goal, neither portfolio minimized the conditional volatility. If the realized volatility was the goal, neither portfolio maximized the conditional return. Below are two tables that describe some candidate alternatives and how they differ from the realized portfolio.
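The selection of candidate alternatives can be sketched as follows. This is one possible reading, not necessarily how the tables were built: "conditional volatility" is taken as the lowest volatility among candidates that return at least as much as the realized portfolio, and "conditional return" as the highest return among candidates no more volatile than it. The candidate list, the realized figures, and the 4% risk-free rate are hypothetical.

```python
def conditional_benchmarks(cloud, actual_ret, actual_std, risk_free_rate=0.0):
    """Pick benchmark candidates from a list of (return, std) pairs."""
    # Candidates that earn at least the realized return.
    at_least_ret = [p for p in cloud if p[0] >= actual_ret]
    # Candidates that are no more volatile than the realized portfolio.
    at_most_std = [p for p in cloud if p[1] <= actual_std]
    return {
        "min_volatility": min(cloud, key=lambda p: p[1]),
        "max_return": max(cloud, key=lambda p: p[0]),
        "max_sharpe": max(cloud, key=lambda p: (p[0] - risk_free_rate) / p[1]),
        "min_vol_given_return": (min(at_least_ret, key=lambda p: p[1])
                                 if at_least_ret else None),
        "max_return_given_vol": (max(at_most_std, key=lambda p: p[0])
                                 if at_most_std else None),
    }


# Hypothetical (return, std) candidates and realized portfolio.
candidates = [(0.08, 0.10), (0.12, 0.14), (0.15, 0.16), (0.18, 0.25), (0.22, 0.35)]
realized = (0.12, 0.18)

result = conditional_benchmarks(candidates, *realized, risk_free_rate=0.04)
for name, point in result.items():
    print(f"{name}: {point}")
```

Comparing the realized portfolio with each of these candidates gives the differences that such a table would report, for example how much volatility could have been shed at the same return.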
