Who benefits from trade between the US and China? If China subsidizes its exporting industries, should the US see this as a threat that undermines our industries, or thank China for lowering prices for US consumers? Does it matter that China runs a persistent trade surplus (exporting more than it imports), while the US runs a persistent trade deficit?

Everyone has a take on these questions, but the answers I hear, even from economists, rarely draw on the leading modern models in the international trade literature. Krugman (1980) (10k citations) shows how large home markets matter for industries with increasing returns to scale. In a simple increasing returns model, unlike in Econ 101 comparative advantage, a temporary subsidy can permanently flip which country an industry efficiently operates in.
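
To make the lock-in logic concrete, here is a toy sketch in Python. It is not Krugman's model, just a minimal illustration of increasing returns under assumptions I made up: two countries with identical technology, one industry, average cost equal to a marginal cost plus a fixed cost spread over output, and all demand going to whichever country offers the lower price. Every name and number below is hypothetical.

```python
def simulate(periods, subsidy_periods, subsidy,
             fixed_cost=100.0, marginal_cost=1.0,
             demand=100.0, fringe=1.0):
    """Return which country ("A" or "B") serves the market in each period.

    Hypothetical toy, not taken from any of the cited papers:
    - both countries share the same technology,
    - average cost = marginal_cost + fixed_cost / scale (increasing returns),
    - the cheaper country wins all demand and runs at full scale next period,
      while the loser shrinks to a small fringe scale,
    - country B receives a per-unit subsidy only in `subsidy_periods`.
    """
    scale = {"A": demand, "B": fringe}  # A starts as the incumbent
    winners = []
    for t in range(periods):
        # Average cost falls as scale rises.
        price = {c: marginal_cost + fixed_cost / q for c, q in scale.items()}
        if t in subsidy_periods:
            price["B"] -= subsidy
        # Ties go to A because it is listed first.
        winner = min(price, key=price.get)
        winners.append(winner)
        scale = {c: (demand if c == winner else fringe) for c in scale}
    return winners


no_subsidy = simulate(periods=6, subsidy_periods=set(), subsidy=0.0)
one_period_subsidy = simulate(periods=6, subsidy_periods={2}, subsidy=100.0)
print(no_subsidy)
print(one_period_subsidy)
```

In this sketch a subsidy paid in a single period is enough for B to take over the industry and keep it after the subsidy ends, because scale, not any underlying cost advantage, decides who is cheaper. With constant returns (set `fixed_cost=0.0`) the two countries always tie and the subsidy has no lasting effect.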

Melitz (2003) (20k citations) extends the Krugman model to include firm-level productivity differences. Rubini (2014) extends the Melitz model to include innovation. Most recently, Xiao (2025) extends the Rubini model to include unbalanced trade and calibrates it with data from the US and China. Now that the mathematical models can incorporate more and more features of the real world, what do they show?

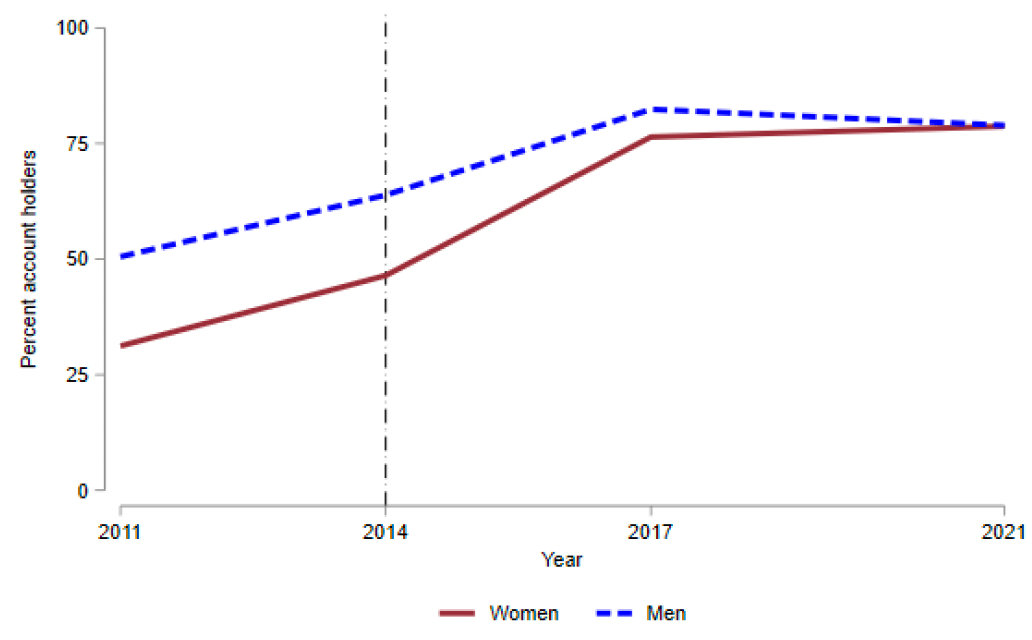

China’s trade surplus and the US trade deficit each come with a tradeoff. China’s trade surplus makes it more productive than it otherwise would be, but leaves it with lower welfare, because so much of the fruit of its production is enjoyed by other countries. Conversely, the US trade deficit leads us to produce less than we otherwise would, but gives us higher welfare, thanks to consumers enjoying the cheaper foreign goods.

In one sense this recapitulates some of the same debates people had without the math. Some people like trade because it benefits US consumers and overall present-day US wellbeing. Some don’t like it because it harms US manufacturing and our resiliency in any potential future conflict.

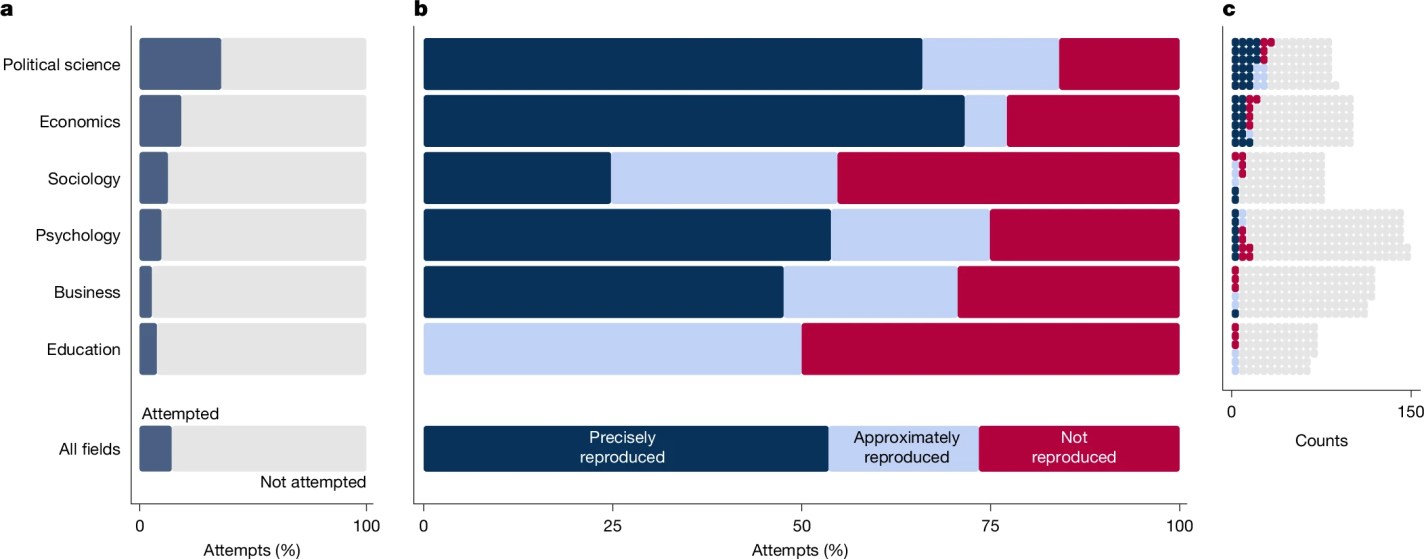

One advantage of the models is that they put numbers on the tradeoffs. In this case, the welfare benefit to the US may be small relative to China’s welfare loss and relative to both countries’ productivity changes:

> the average productivity increase caused by trade surplus ranges from 1.2 percentage points to 5.46 percentage points when the innovation cost changes. These results explain China’s long-term export promotion policies and align with its new policy goal of developing “new productivity forces”. I also identify a negative effect on China’s trade partners’ productivity (namely, the US), of between -2.74 percentage points and -5.89 percentage points. This comes at a welfare cost, equivalent to between 3 percentage points and 5.7 percentage points of consumption units. Correspondingly, China’s cheaper goods increase welfare in the US by between 0.26 percentage points and 1.22 percentage points
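
To see how lopsided these ranges are, here is a quick back-of-the-envelope comparison in Python. The four ranges are copied from the passage quoted above; the variable names, the midpoint summary, and the choice to treat each range as independent of the others are my own simplifications, not something the paper does.

```python
# Ranges quoted from Xiao (2025), in percentage points: (low, high).
# Losses are stored as positive magnitudes.
china_productivity_gain = (1.2, 5.46)
us_productivity_loss = (2.74, 5.89)
china_welfare_loss = (3.0, 5.7)
us_welfare_gain = (0.26, 1.22)


def midpoint(value_range):
    """Midpoint of a (low, high) range."""
    low, high = value_range
    return (low + high) / 2


def ratio_bounds(numerator, denominator):
    """Smallest and largest ratio if each quantity can sit anywhere in its range.

    Assumption: the two ranges vary independently, which the paper does not claim.
    """
    return numerator[0] / denominator[1], numerator[1] / denominator[0]


low, high = ratio_bounds(us_welfare_gain, china_welfare_loss)
print(f"US welfare gain / China welfare loss: {low:.2f} to {high:.2f}")
print(f"Midpoints: US gain {midpoint(us_welfare_gain):.2f}, "
      f"China loss {midpoint(china_welfare_loss):.2f}")
```

Even pairing the top of the US range with the bottom of China's, the US welfare gain comes to well under half of China's welfare loss.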

In addition to the big complex model, Xiao’s paper shares nice background on the sheer size of Chinese export subsidies, noting that they account for 2/3 of all manufacturing subsidies in G20 countries, and that export tax rebates are almost 2/3 as large as Chinese net exports. In short, China’s trade surplus is not simply driven by differing preferences and production capabilities across countries, but is largely driven by deliberate policy choices.

P.S. The paper’s author, Aochen Xiao, is on the econ job market.