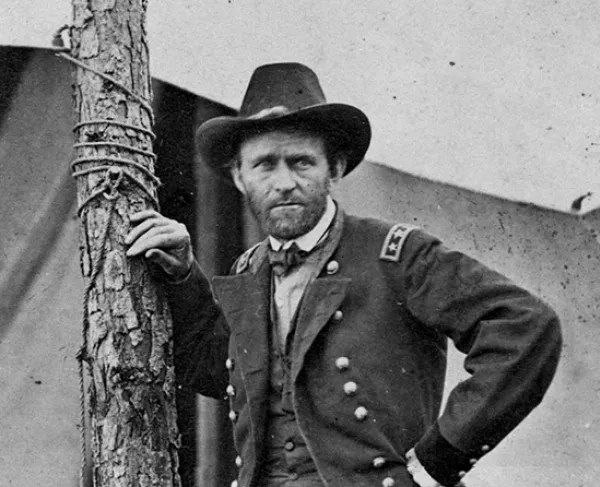

This is the fifth in a series of occasional blog posts on individual initiatives that made a strategic (not just tactical) difference in the course of the Second World War. There were many good generals in WWII, but for the most part they were good just by not being bad. Eisenhower, for instance, did a fine job as the supreme commander in the European theater, but that mainly involved being diplomatic and avoiding dumb mistakes, not strategic brilliance. Also, for the most part, no general was so good or so bad that his conduct swayed the ultimate outcome of the war.

I see Soviet general Georgy Zhukov as an exception here. The Soviet Union came very close to collapse under the weight of the German assault in 1941-42. If the Nazis had won there and consolidated their hold over Russian resources, I doubt whether the Western Allies could have successfully liberated Europe; they barely hung on for the first few weeks of the 1944 Normandy invasion, and that was with most of the German forces off fighting the Russians. Zhukov is widely seen as the strategic mastermind behind four key Soviet victories in 1939-1942 that allowed the Soviet Union to survive and then go on the offensive.

The first of these victories was a battle that hardly anyone remembers anymore, but it had enormous strategic ramifications. In 1938-39, the Japanese kept pushing out from their occupied territory in northern China towards Mongolia (a Soviet satellite). The Japanese had beaten the Russians in 1905, and had trounced, massacred and occupied the Chinese all through the 1930s. So they were pretty cocky. There were several inconclusive battles between Japanese and Soviet forces along the border. Finally, in August 1939, the newly arrived General Zhukov decided to put an end to it at the Khalkhin Gol river, which is otherwise in the middle of nowhere. The usual approach to achieving victory was to mass a huge number of your troops and hurl them at the enemy position. Sure, half your guys would die during the attack, but the rest might break through.

But Zhukov did something very different. Over the course of weeks, he stealthily built up highly mobile forces on the far left and right flanks, hiding the tanks under camouflage. Then he opened the attack with an assault on the center, backed by enormous artillery and air bombardment, while lying low on the flanks. After the Japanese had pulled their forces in to reinforce the center, he unleashed his armored columns, which raced around behind the Japanese force to cut it off. Since the Japanese refused to surrender, they were annihilated. Such is the power of a well-executed pincer attack, also known as a double envelopment.

The magnitude of this defeat led Japan to abandon its plans to expand further to the north, with two key consequences. First, with the Japanese threat removed, the Soviets could transfer troops from the Far East just in time to stop the Germans in the west. Second, Japan decided to expand to the south instead, which led to its fateful decision to attack Pearl Harbor in an effort to keep America from interfering there.

An early objective of the Germans when they invaded the Soviet Union in June 1941 was Leningrad, the second-largest Soviet city. The city was nearly surrounded by Axis forces by the time Zhukov arrived to take over in September. Zhukov restored discipline (threatening execution for unauthorized withdrawal), fortified the defenses, and launched counterattacks that stabilized the front lines, preventing an immediate German assault. He oversaw the evacuation of 1.5 million civilians, the relocation of key industry, and the opening of a vital supply lifeline. This allowed Leningrad to hang on by a thread through some 900 days of siege, and it kept a significant number of German soldiers tied up there.

The Germans then refocused on taking Moscow, far to the southeast of Leningrad. They almost succeeded, getting to within 10 miles of the Kremlin. Zhukov was rushed to Moscow in October 1941 to organize the defense there, which he did with his characteristic energy. He mobilized some 250,000 women and teenagers to dig three rings of defensive fortifications. After months of costly back-and-forth fighting, in the depths of the coldest winter of the twentieth century, Zhukov unleashed a pincer counterattack, which forced the Germans back over 100 miles to avoid encirclement. One result of this victorious counterattack was that Moscow was saved. The other result was also extremely important: in the wake of this major German defeat, Hitler assumed direct operational command over the German army. This was a complete disaster. The centralization of command negated the German army's greatest strengths, its flexible command structure and the professional competence of its General Staff. Hitler's refusal to allow tactical withdrawals led to the encirclement and destruction of entire armies.

In 1942 the Germans decided to focus their efforts on southern Russia, to seize control of the vital oil fields in the Caucasus region. The city of Stalingrad, with its considerable industrial and armaments base and its prestigious name, lay along the way, so the Germans aimed to occupy it. The Soviets kept up a desperate defense in the city ruins, but the elite German Sixth Army kept advancing until it had taken some 90% of the city. But this near-victory was turned into a stunning defeat. Zhukov, now in charge of the defense of Stalingrad, stealthily built up enormous forces on the far flanks of the Stalingrad front, where the Axis lines were thinly held by poorly equipped Romanian troops. In a reprise of his Khalkhin Gol maneuver, Zhukov sent his two flanking forces smashing through these lines, and they met to seal off some 330,000 Axis troops in or near Stalingrad. The Germans tried to relieve this pocket, but the Russian winter and Soviet resources were against them. The Soviet victory was complete: an entire German army was killed or captured, and the initiative on the Eastern Front passed to the Soviets for the rest of the war.

A Few Caveats

What I wrote above was gleaned from various tertiary sources, including Wikipedia, which reflect the common consensus in the West. In the interest of accuracy, however, I note that some of this brilliant, heroic story is a self-serving myth promulgated by the Soviets as a morale booster, and by Zhukov himself in his not-quite-truthful memoir. There is good reason to believe that he took credit for what was actually the work of others, especially in the planning of the Stalingrad counteroffensive.

Zhukov was mainly responsible for the planning and execution of the disastrous 1942-43 Rzhev offensive, where he threw division after division into fruitless attacks. This campaign cost the Red Army some 1 million casualties while accomplishing almost nothing. It was largely written out of Soviet history, and Zhukov carefully downplayed his role in it.

That campaign highlighted Zhukov's notorious disregard for human life. Another window into that disregard comes from Dwight Eisenhower's memoirs. Eisenhower records his surprise during a conversation with Zhukov, when the Soviet general explained matter-of-factly how he sometimes cleared a path through a minefield by making some of his soldiers run through it in a line to set off the pesky mines. The grim logic was that a Soviet soldier who disobeyed orders faced a near-100% chance of being executed, whereas one who ran through the minefield had at least some small chance of making it without getting his legs blown off.

Finally, the regime Zhukov defended was arguably as horrible as the regime he fought against. Nevertheless, I suspect the world that would have resulted had Georgy Zhukov failed would have been worse than the actual world of, say, 1945.

Marshal Georgy Zhukov at a postwar parade in Moscow. (Image source: Wikimedia Commons)