One of Anthropic’s biggest wins has been its wildly popular Claude Code program, which can do nearly all the grunt work of programming. Properly prompted, it can build new features, migrate databases, fix errors, and automate workflows.

So it was big news in the AI world last week when an Anthropic employee accidentally exposed a link that allowed folks to download the source code for this crown jewel: the entire code, all 512,000 lines of it, which revealed the complete logic flow of the program, down to the tiniest features. For instance, Claude Code scans for profanity and negative phrases like “this sucks” to discern user sentiment, and tries to adjust for user frustration.
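To give a feel for what phrase-based sentiment scanning can look like, here is a minimal sketch in Python. It is an illustration only, not the leaked code: the function name `seems_frustrated` and the phrase list are made up for this example, and only “this sucks” comes from the reporting above.

```python
import re

# Hypothetical phrase list for illustration; only "this sucks" is
# mentioned in the article, the other entries are assumptions.
NEGATIVE_PHRASES = ["this sucks", "so frustrating", "still broken"]

# One case-insensitive pattern that matches any phrase as whole words.
_PATTERN = re.compile(
    "|".join(r"\b" + re.escape(phrase) + r"\b" for phrase in NEGATIVE_PHRASES),
    re.IGNORECASE,
)


def seems_frustrated(message: str) -> bool:
    """Return True if the message contains any listed negative phrase."""
    return _PATTERN.search(message) is not None
```

A tool built this way could check each user message and, when the function returns True, switch to a more careful or apologetic response style. How the real program reacts to such a signal is not something this sketch claims to show.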

Gleeful researchers, competitors, and hackers promptly downloaded zillions of copies. Anthropic issued broad copyright takedown requests, but the damage was done. Researchers quickly used AI to rewrite the original TypeScript source code into Python and Rust, claiming to get around copyright laws on the original code. Oh, the irony: for years, AI purveyors have been arguing that when they ingest the contents of every published work (including copyrighted works) and repackage them, that’s OK. So now Anthropic is tasting the other side of that claim.

The leak has been damaging to Anthropic to some degree. Competitors don’t have to work to try to reverse engineer Claude Code, since now they know exactly how it works. Hackers have been quick to exploit vulnerabilities revealed by the leak. And Anthropic’s claim to be all about “Safety First” has been tarnished.

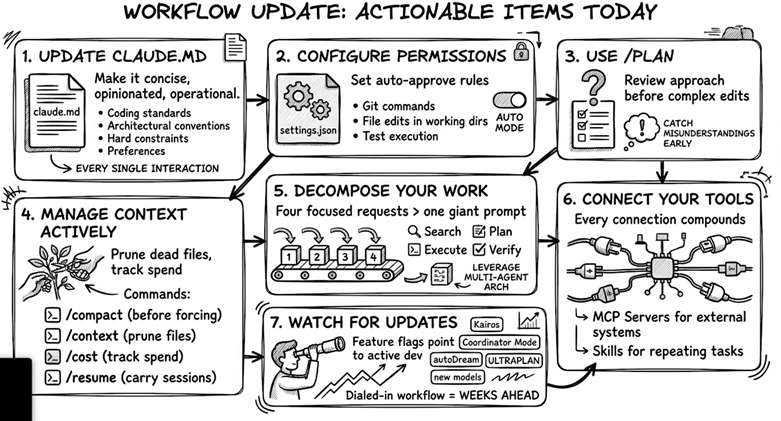

On the other hand, the model weights weren’t exposed, so you can’t just run the leaked code and get Claude’s results. Also, no customer data was revealed. Power users have been able to discern from the source how to run Claude Code most advantageously. This YouTube by Nick Puru discussed such optimizations, which he summarized in this roadmap:

There have actually been a number of unexpected benefits of the leak for Anthropic. Per an AI-generated summary:

Brand resonance and community engagement have surged, with some observers calling the incident “peak anthropic energy” that generated significant hype and validated the product’s technical impressiveness. The leak has acted as a massive free marketing campaign, reinforcing the narrative of a fast-moving, innovative company while bouncing the brand back among developers despite the security lapse.

Accelerated ecosystem adoption and bug fixing are also potential benefits, as the exposure allowed engineers to dissect the agentic harness and create open-source versions or “harnesses” that keep users within the Anthropic ecosystem. Additionally, the public scrutiny likely helps identify and patch vulnerabilities faster, while the leaked source maps provide a roadmap for competitors to build “Claude-like” agents, potentially standardizing the market around Anthropic’s architectural patterns.

The leak also revealed hidden roadmap features that build anticipation, such as:

- Kairos: A persistent background daemon for continuous operation.

- Proactive Mode: A feature allowing the AI to act without explicit user prompts.

- Terminal Pets: Playful, personality-driven interfaces to increase user engagement.

Because of these benefits, conspiracy theorists have proposed that Anthropic leaked the code on purpose, or even (April Fools!) leaked fake code. Fact checkers have come to the rescue to debunk the conspiracy claims. But in the humans vs. AI debate, this whole kerfuffle doesn’t make humans look so great.