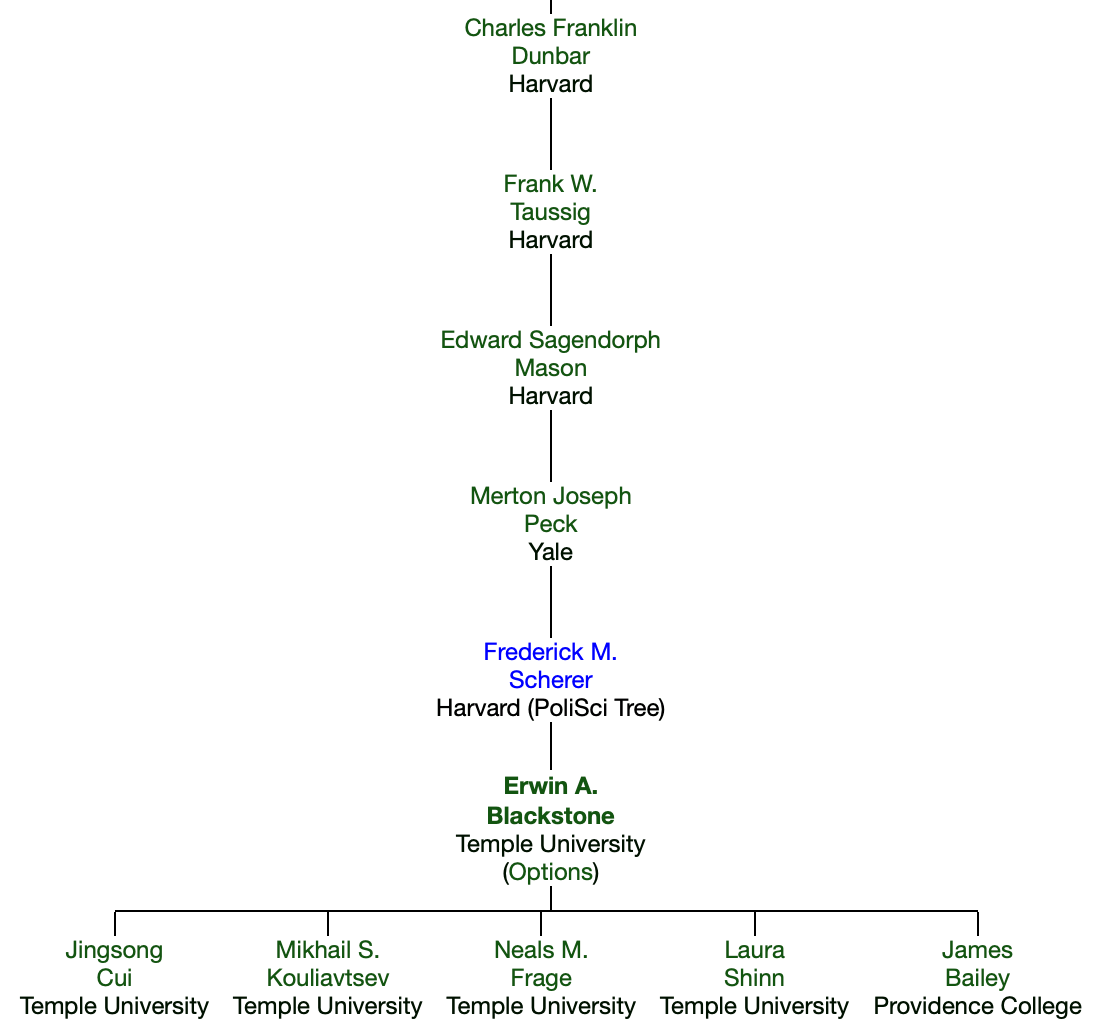

I’m told that Professor Erwin Blackstone died earlier this year, but I haven’t been able to find anything like an obituary online; consider this a personal memorial.

I knew Dr. Blackstone first as the professor of my Industrial Organization class at Temple University, where he taught since 1976. He was a model of how to take students seriously and treat them respectfully; he always called on us as “Mr./Ms. Last Name” and thought carefully about our questions.

Of course I learned all sorts of particular things about IO, especially US antitrust law and history- from Judge Learned Hand and baseball’s antitrust exemption to current merger guidelines and cases. I would later ask Dr. Blackstone to join my thesis committee, where he would heavily mark up my papers with comments and critiques.

He was a key part of how I was able to become a health economist despite the fact that Temple lacked a true health economist on the tenure-track economics faculty while I was there (as opposed to IO or labor economists who did some health). Blackstone’s coauthor Joseph Fuhr– a true health economist who also had Blackstone on the committee of his 1980 dissertation- came part-time to teach graduate health economics. Blackstone and Fuhr worked together to write the health economics field exam I took.

Finally, I learned from Blackstone by reading his papers. While he wrote many on health economics, my personal favorite was his work with Andrew Buck and Simon Hakim on foster care and adoption. It convincingly demonstrated the problems of having one fixed price in an area that most people don’t think about as a “price” at all- adoption fees. Having one fairly high fee for all children means the few seen as most desirable by adopting parents (typically younger, whiter, healthier) get adopted quickly, while those seen as less desirable by would-be adoptive parents linger in foster care for years. Like much of his work, it pairs a simple economic insight with a rich explanation of the relevant institutional details.

Academics hope to live on through our work- through our writing and the people we taught. Having taught many thousands of students at Cornell, Dartmouth, and Temple over 55 years, served on dozens of dissertation committees, and published over 50 papers and several books, I expect that it will be a long, long time before Erwin Blackstone is forgotten.