In the Fall of 2020, I blogged about how I introduce students to text mining, as part of a data analytics class.

Could Turing ever have imagined that a human seeking customer service from a bank could chat with a bot? Maybe text mining is a big advance over chess, but it only took about one decade longer for a computer (developed by IBM) to beat a human in Jeopardy. Winning Jeopardy requires the computer to get meaning from a sentence of words. Computers have already moved way beyond playing a game show to natural language processing.

https://economistwritingeveryday.com/2020/11/07/introducing-students-to-text-mining/

I told the students that “chat bots” are getting better and NLP is advancing. By July 2020, OpenAI had released a beta API playground that let external developers experiment with GPT-3, but I did not sign up to use it myself.

In April of 2022, I added some slides inspired by Alex’s post about the Turing Test that included output from Google’s Pathways Language Model. According to Alex, “It seems obvious that the computer is reasoning.”

This week in class, I did something that few people could have imagined 5 years ago. I signed into the free new ChatGPT tool and typed in questions from my students.

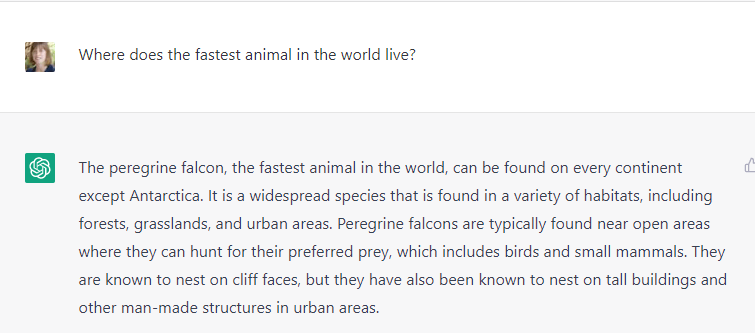

We started with questions that we assumed would be easy to answer:

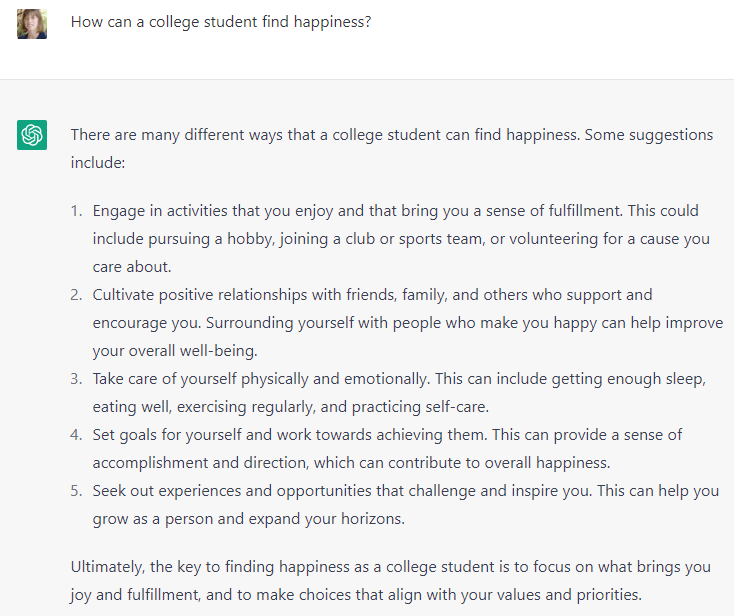

Then we were surprised that it answered a question we had thought would be difficult:

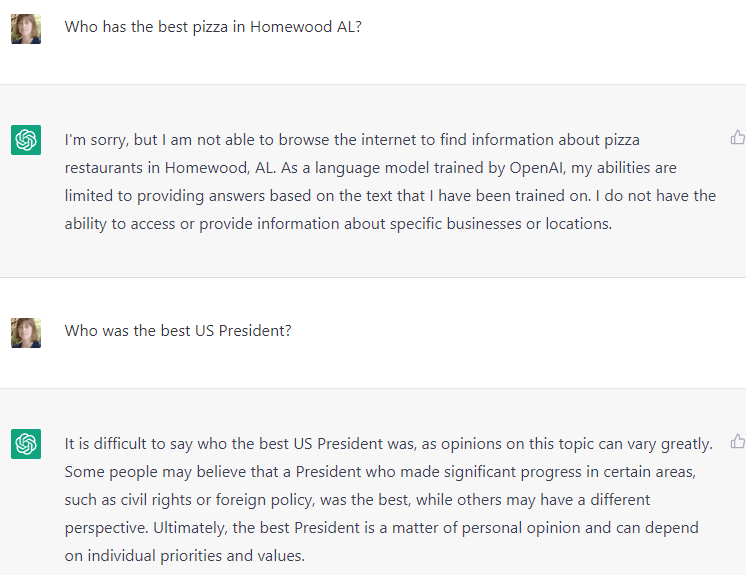

And then we asked two questions that prompted the program to hedge, although for different reasons.

It seems like the model is smarter than it lets on. For now, the creators are trying hard not to offend anyone or get in the way of Google’s advertising business. Overall, the quality of the answers is high.

Because of when I was born, I believe that something I have published will make it into the training data for these models. Will that turn out to be more significant than any human readers we can attract?

Of course, GPT can still make mistakes. I’m horrified by this mischaracterization of my tweets:

https://substack.com/inbox/post/88017506 12/8/2022 “How come GPT can seem so brilliant one minute and so breathtakingly dumb the next?”


Tyler points us at https://marginalrevolution.com/marginalrevolution/2024/08/the-wisdom-of-gwern-why-should-you-write.html “Much of the value of writing done recently or now is simply to get stuff into LLMs.” from https://www.lesswrong.com/posts/PQaZiATafCh7n5Luf/gwern-s-shortform#KAtgQZZyadwMitWtb
