70,000: that’s the number of adults (age 25 and older) in the US who earned the federal minimum wage of $7.25 per hour in 2021.

Another 538,000 adults reported earning less than the minimum wage, but these are likely workers who earn tips, which aren’t included in their reported hourly wages. Legally, tipped workers must make at least $7.25 per hour once tips are counted, and many of them earn more. The data are from a 2022 BLS report based on Current Population Survey (CPS) data (hopefully the 2023 report is coming out soon).

If we include all workers age 16 and older, there are about 1.1 million people earning the federal minimum wage or less. That’s just 1.4% of hourly wage earners, and only 0.8% of all workers (including salaried workers). Crucially, this share has declined dramatically over time, from a high of 15.1% of hourly wage earners (8.9% of all workers) in 1981. It has even declined significantly since 2010, the first full year that the $7.25 federal minimum was in effect, when 6% of hourly wage earners (3.5% of all workers) earned $7.25 or less.

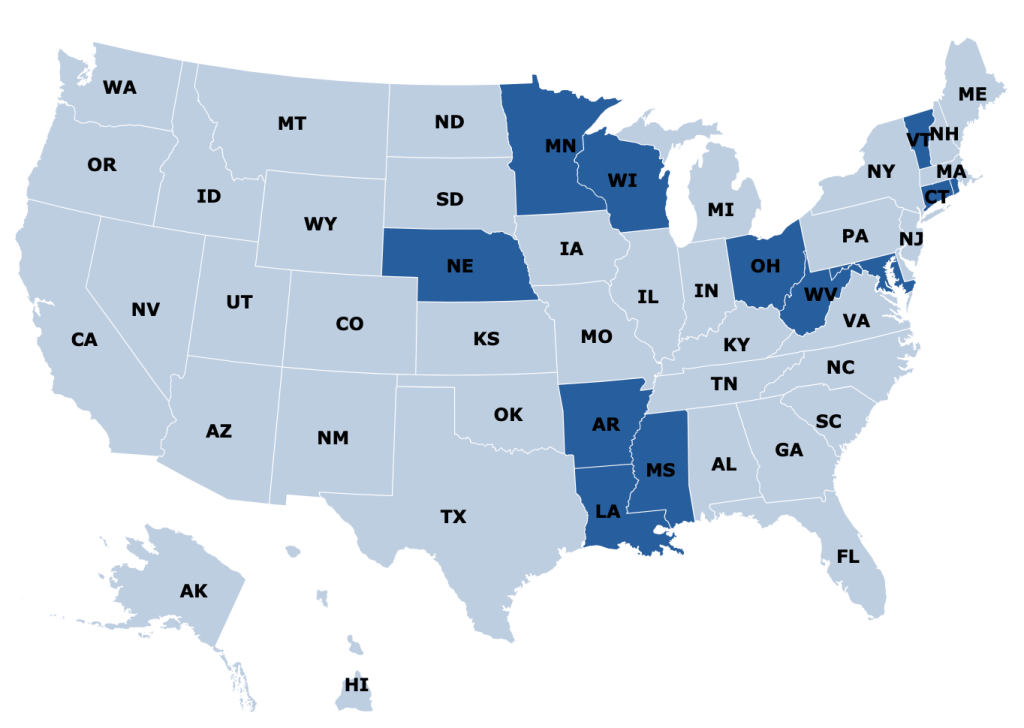

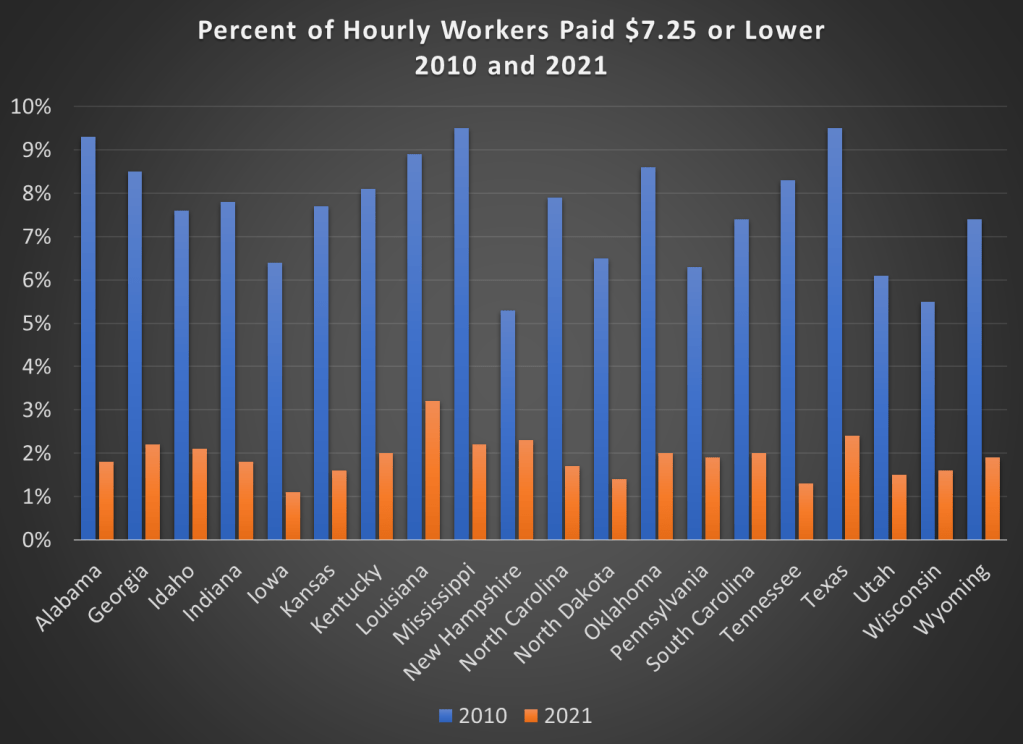
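The decline can be read two ways: as a percentage-point drop in the share, or as a relative drop. A minimal sketch using only the shares of hourly wage earners quoted above; the function name `share_change` is illustrative, not from any BLS tool:

```python
def share_change(start_pct: float, end_pct: float) -> tuple[float, float]:
    """Return (percentage-point change, relative change in percent) between two shares."""
    pp_change = end_pct - start_pct
    relative_change = pp_change / start_pct * 100
    return pp_change, relative_change


# Shares of hourly wage earners at or below the federal minimum, as quoted in the text.
shares = {1981: 15.1, 2010: 6.0, 2021: 1.4}

for start_year in (1981, 2010):
    pp, rel = share_change(shares[start_year], shares[2021])
    print(f"{start_year}-2021: {pp:+.1f} percentage points ({rel:+.0f}%)")
# 1981-2021: -13.7 percentage points (-91%)
# 2010-2021: -4.6 percentage points (-77%)
```

So even the post-2010 drop of under 5 percentage points means the share fell by roughly three quarters.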

Perhaps, though, a big part of this decline reflects the fact that most states (and even some cities and counties) now have minimum wages above the federal level, in some cases well above it. Today, only 20 states use the federal minimum wage. No doubt this is important!

However, even if we focus just on the 20 states that use $7.25 per hour as the minimum, there were also large declines in the share of hourly wage earners who earned $7.25 or less. In some states the share fell by 7 percentage points or more from 2010 to 2021, and in every one of these states it fell by at least 3 percentage points.

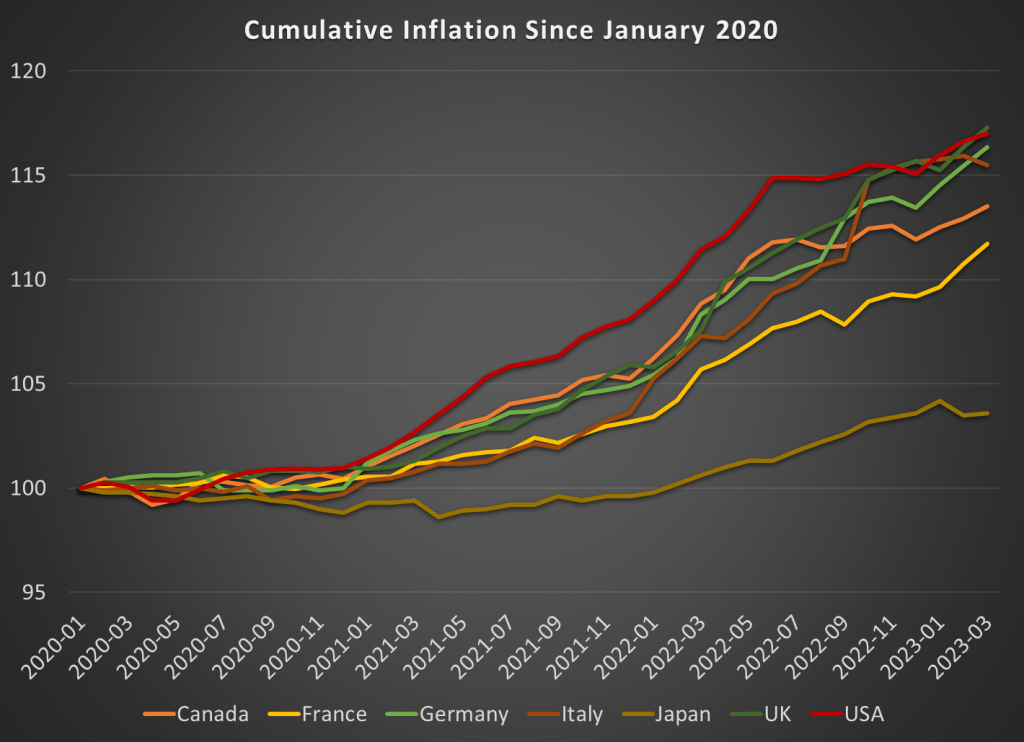

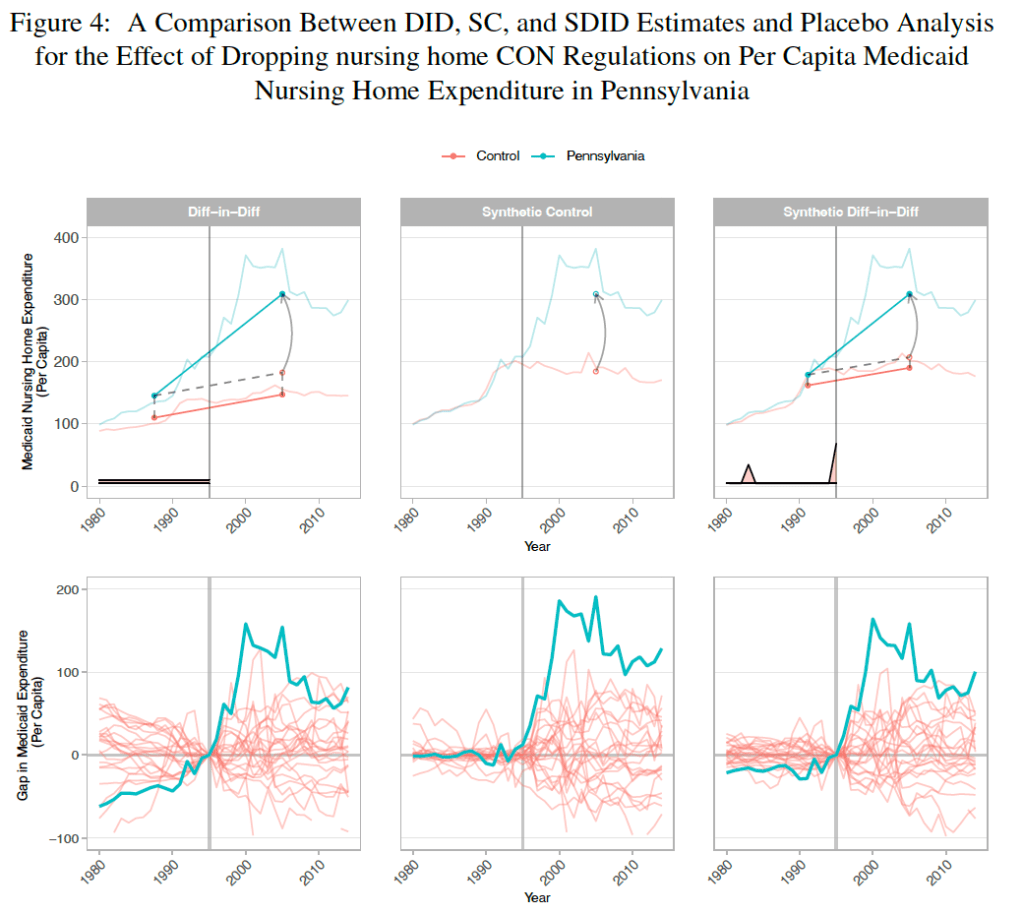

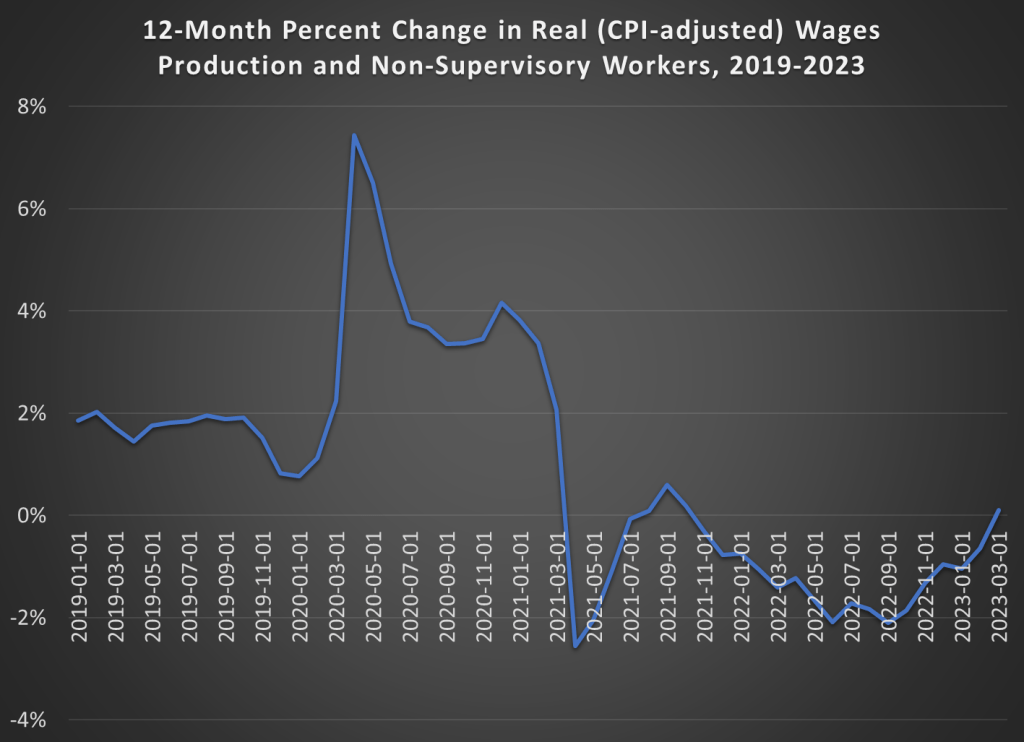

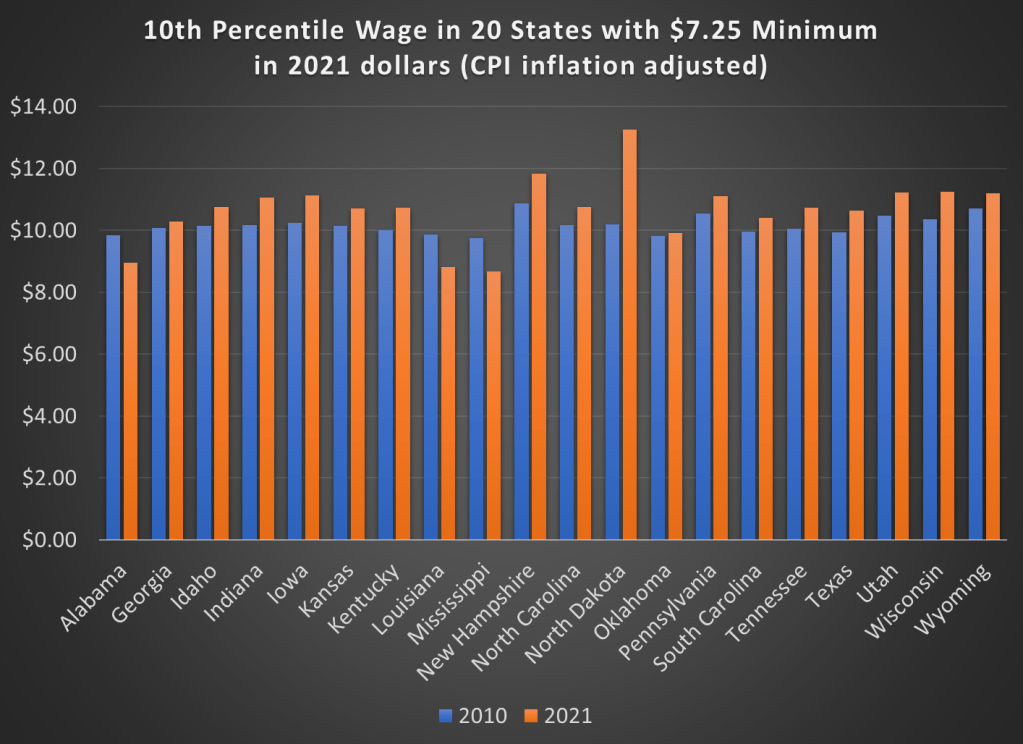

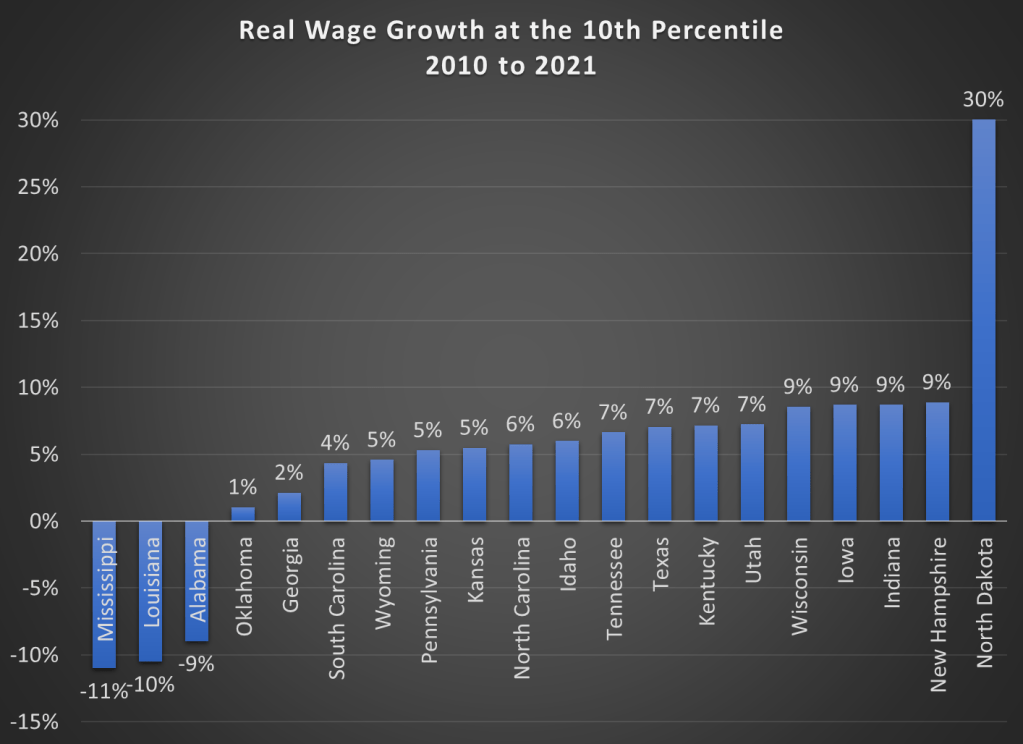
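The state comparison is the same percentage-point arithmetic applied state by state. A minimal sketch, assuming the 2010 and 2021 CPS shares are already in a dict keyed by state; the function name and data layout are hypothetical, and no actual state values are reproduced here:

```python
def summarize_state_declines(
    shares: dict[str, tuple[float, float]], big_drop_pp: float = 7.0
) -> tuple[float, list[str]]:
    """Given {state: (share_2010, share_2021)} in percent, return the smallest
    decline in percentage points and the sorted states whose decline is at
    least big_drop_pp."""
    # Positive values mean the share at or below $7.25 fell between 2010 and 2021.
    declines = {state: s2010 - s2021 for state, (s2010, s2021) in shares.items()}
    smallest = min(declines.values())
    big = sorted(state for state, d in declines.items() if d >= big_drop_pp)
    return smallest, big
```

Fed the 20 federal-minimum states, the claims in the text correspond to `smallest >= 3` and a non-empty list of states with drops of 7 points or more.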

But maybe what’s going on is that employers are just providing wage increases that keep up with price inflation. So while fewer workers are earning the federal minimum wage, maybe they are no better off. We can address that possibility using BLS’s occupational wage data, which let us look at wages at the 10th percentile (these aren’t exactly minimum wage earners, but they are close). Real wages did decline in a few states (Alabama, Louisiana, and Mississippi), but most of the 20 states experienced clear real wage growth at the 10th percentile from 2010 to 2021.

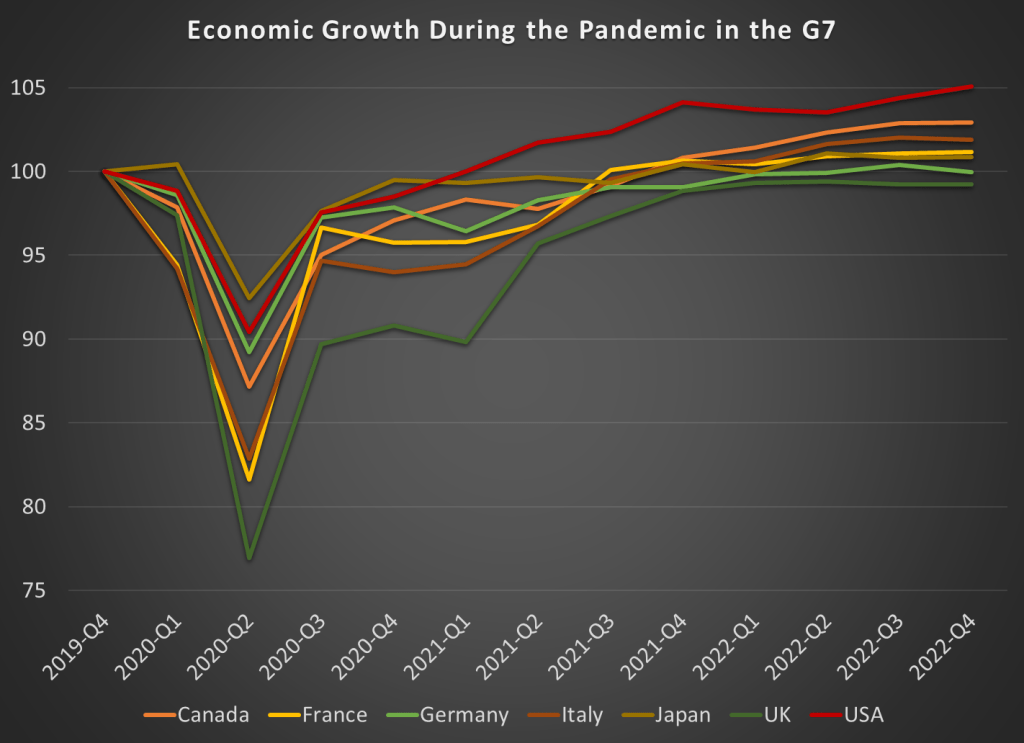
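Adjusting for inflation means restating the 2010 wage in 2021 prices before comparing. A minimal sketch; the text says only "CPI", so using the annual-average CPI-U (2010 = 218.056, 2021 = 270.970) is an assumption here, and the function names are illustrative:

```python
# Annual-average CPI-U, all items (assumed index choice; the text says only "CPI").
CPI_U_2010 = 218.056
CPI_U_2021 = 270.970


def deflate(wage: float, cpi_from: float, cpi_to: float) -> float:
    """Restate a nominal wage measured at cpi_from prices in cpi_to prices."""
    return wage * cpi_to / cpi_from


def real_pct_change(
    wage_start: float, wage_end: float, cpi_start: float, cpi_end: float
) -> float:
    """Percent change in the real wage, with the start wage restated in end-period prices."""
    real_start = deflate(wage_start, cpi_start, cpi_end)
    return (wage_end / real_start - 1) * 100


# An unchanged $7.25 minimum lost about a fifth of its purchasing power over the period.
print(f"{real_pct_change(7.25, 7.25, CPI_U_2010, CPI_U_2021):.1f}%")  # -19.5%
```

Plugging in each state's 10th-percentile wage for 2010 and 2021 gives the kind of real percent changes shown next.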

Here are the changes in percentage terms (once again, adjusted for CPI inflation).

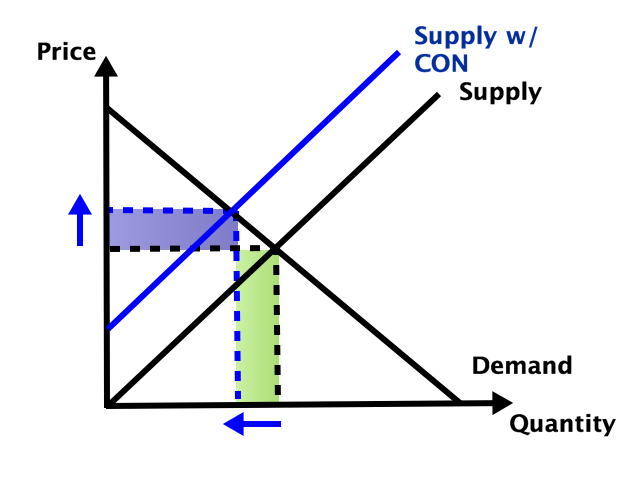

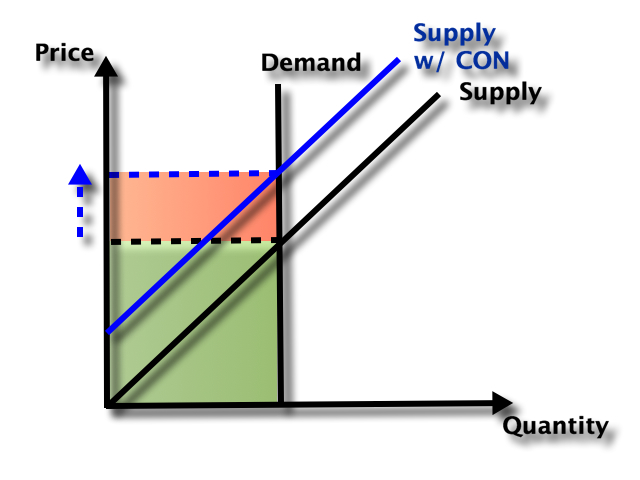

Some might see this growing irrelevance of the minimum wage as a reason to raise the federal minimum wage. But as the data from most of these states show, wages are already rising, which suggests that these are competitive labor markets. The case for raising the legal minimum wage in a competitive labor market is weak (it is stronger in a monopsony labor market).