This is the second of two blog posts on my paper “Willingness to be Paid: Who Trains for Tech Jobs”. Follow this link to download the paper from Labour Economics (free until November 27, 2022).

Last week I focused on the main results from the paper:

- Women did not reject a short-term computer programming job at a higher rate than men.

- For the incentivized portions of the experiment, women had the same reservation wage for programming as men. Women also appeared to be as confident in their ability as men, according to a belief elicitation.

- The main gender-related outcomes were, surprisingly, null results. I ran the experiment three times with slightly different subject pools.

- However, their self-reported survey answers suggest that women might be less likely to pursue programming outside of the experiment. Women are more likely to say they are “not confident” and more likely to say they expect harassment in a tech career.

- In all three experiments, the attribute that best predicted whether someone would program was whether they said they enjoy programming. This subjective attitude appears to matter even more than having taken programming classes previously.

- Beyond saying they “enjoy programming” or “like math,” opportunity cost of time mattered: subjects with a high opportunity cost of time were less willing to return to the experiment to program at a given wage level.

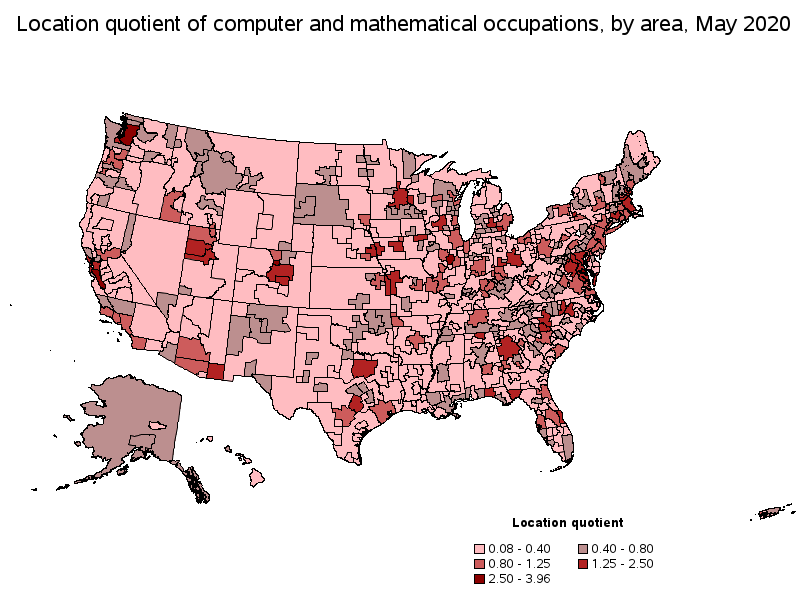

I wrote this paper partly to understand why more people are not attracted to the tech sector, where wages are high. This recent tweet indicates that, although more young people may be training for tech than ever before, the market price for that labor is still quite high.

The neat thing about controlled experiments is that you can randomly assign treatment conditions to subjects. This post is about what happened after I gave some subjects either extra information or encouragement.

Informed by reading the policy literature, I assumed that a lack of confidence was a barrier to pursuing tech. A large study done by Google in 2013 suggested that women who major in computer science were influenced by encouragement.

I provided an encouraging message to two treatment groups. The long version of this encouraging message was:

> If you have never done computer programming before, don’t worry. Other students with no experience have been able to complete the training and pass the quiz.

Not only did this message fail to have a significant positive effect on willingness to program, but there is some indication that it made subjects less confident and less willing to program. For example, in the “High Stakes” experiment, the reservation wage for subjects who had seen the encouraging message was $13 higher than for the control subjects.

My experiment does not prove that encouragement never matters, of course. Most people believe that the right kind of encouragement can nudge behavior. My results could serve as a cautionary tale for policy makers who would like to scale up encouragement. John List’s latest book, *The Voltage Effect*, discusses the difficulty of delivering effective interventions at scale.

The other randomly assigned intervention was extra information, called INFO. Subjects in the INFO treatment saw a sample programming quiz question. Instead of just knowing that they would be doing “computer programming,” they saw some chunks of R code with an explanation. In theory, this excerpt could reassure someone who is not familiar with computer programming. My results show that INFO did not affect behavior. Today, most people already know what programming is: about half of my subjects said they had already taken a class that taught programming. If there is room to educate young adults, perhaps it lies in information about career paths rather than the technical basics.

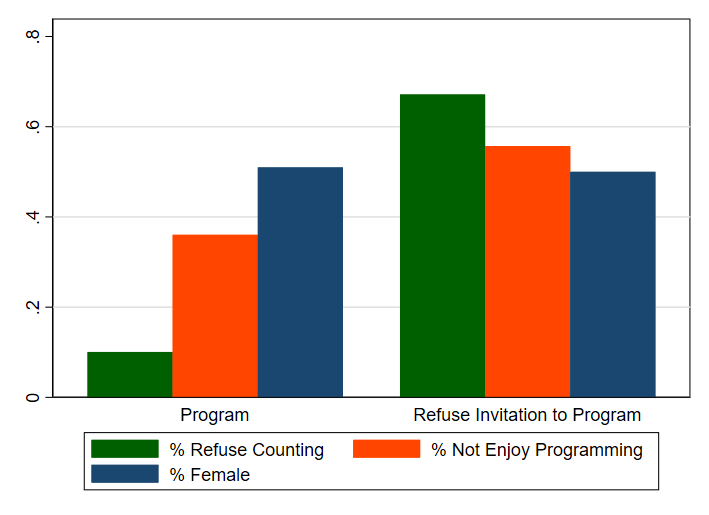

Since the differences between treatments turned out to be negligible, I pooled all of my data (686 subjects total) for certain types of analysis. In the graph below, I classify every subject either as someone who accepted the programming follow-up job or as someone who refused to return to program at any wage. Recall that the highest wage level I offered was considerably higher on a per-hour basis than what I expect their outside earning options to be.
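To make the grouping concrete, here is a minimal Python sketch of how a reservation wage can be read off a list of accept/reject choices over increasing wage levels, and how a subject is then classified as accepting at some wage or refusing at every wage. It assumes a simple price-list format; the wage levels, the function names `reservation_wage` and `would_return`, and the example subjects are all hypothetical and are not the paper's actual design or data.

```python
from typing import Optional, Sequence

# Hypothetical wage menu in dollars; NOT the levels used in the paper.
WAGE_LEVELS = [5.0, 10.0, 15.0, 20.0, 25.0]


def reservation_wage(wages: Sequence[float], accepted: Sequence[bool]) -> Optional[float]:
    """Return the lowest offered wage the subject accepted, or None if the
    subject refused the job at every wage on the menu."""
    if len(wages) != len(accepted):
        raise ValueError("wages and accepted must have the same length")
    accepted_wages = [w for w, ok in zip(wages, accepted) if ok]
    return min(accepted_wages) if accepted_wages else None


def would_return(wages: Sequence[float], accepted: Sequence[bool]) -> bool:
    """Group label for the pooled comparison: True if the subject accepted at any wage."""
    return reservation_wage(wages, accepted) is not None


# Made-up subjects for illustration only, not data from the study.
subject_a = [False, False, True, True, True]     # switches to "accept" at $15
subject_b = [False, False, False, False, False]  # refuses at every wage
print(reservation_wage(WAGE_LEVELS, subject_a))  # 15.0
print(reservation_wage(WAGE_LEVELS, subject_b))  # None
```

Using the lowest accepted wage as a point value is a simplification: with a discrete menu, the true reservation wage lies somewhere between the highest rejected wage and the lowest accepted one.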

I’ll discuss the three features in this graph in what appears to be their order of importance for predicting whether someone wants to program. There was an enormous difference between the two groups in the percentage of people who were willing to return for an easy, tedious task that I call Counting. By inviting all of these subjects to return to count at the same hourly rate as the programming job, I got a rough measure of their opportunity cost of time. Someone with a high opportunity cost of time is less likely to take me up on the programming job. This might seem predictable, but it is a large part of the reason more Americans are not going into tech.

For the first batch of 310 subjects, I have a very clean comparison between the reservation wage for programming and the reservation wage for counting. People who do not enjoy programming require a higher payment to program than to return for the counting job. Self-reported enjoyment is a highly significant factor. The orange bar in the graph shows that the majority of people who accepted the programming job say that they enjoy programming.

Lastly, the blue bar shows the percentage of female subjects in each group. The gender split is nearly the same. As I show in several ways in the paper, there is a surprising lack of a gender gap in incentivized decisions.

I hope that my experiment will inspire more work in this area. Experiments are neat because someone could try to replicate this one with a different group of subjects or with a change to the design. Interesting gaps could open up between subject types under new circumstances.

> The topic of skill problems in the US represents something reasonably new for labor market and public policy discussions. It is difficult to think of a labor market issue where academic research or even research using standard academic techniques has played such a small role, where parties with a material interest in the outcomes have so dominated the discussion, where the quality of evidence and discussion has been so poor, and where the stakes are potentially so large.

Cappelli, PH, 2015. Skill gaps, skill shortages, and skill mismatches: evidence and arguments for the United States. ILR Rev. 68 (2), 251–290.