David Friedman recently got into an online debate with Walter Block that could be seen as a boxing match between “Austrian economics” and the “Chicago School of Economics”. In the wake of this debate, Friedman assembled his thoughts in this piece, which is supposed (if I understand properly) to be published as a chapter in an edited volume. Upon reading it, I thought it worth offering my own thoughts, in part because I see myself as a member of both schools of thought and in part because I specialize in economic history. And here is the claim I want to make: I don’t see any meaningful difference between the two and I don’t understand why there are perpetual attempts to create a distinction.

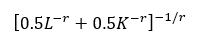

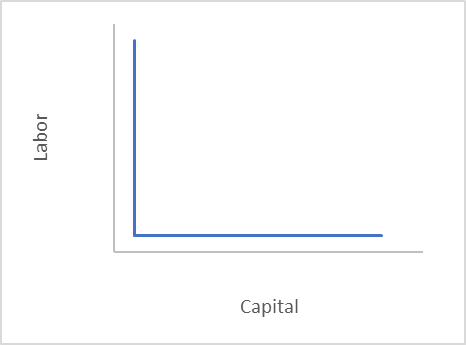

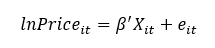

But before that, let’s do a simple summary of the two views according to Friedman (which is the first part of the essay). The “Chicago” version is that you can build theoretical models and then test them. If the model is not confirmed, it could be because a) you used incorrect data, b) you relied on incorrect assumptions, or c) you relied on an incorrect econometric specification. The Austrian version is that you derive axioms of human action and that is it. The real world cannot contradict the axioms; it only serves to provide pedagogical illustrations. That is the way Friedman puts the differences between the schools of thought. The direct implication of this difference is that there cannot be (or there is no point to) empirical/econometric work in the Austrian school’s thinking.

Now, I understand that this is the viewpoint shared by many, as evidenced by a shared distrust of econometrics and mathematical depictions of the economy among Austrian-school scholars. In fact, Rothbard was pretty clear about this in an underappreciated book he authored, A History of Money and Banking in the United States. But I do not understand why.

After all, all models are true if they are logically consistent. I can go to my blackboard and draw up a model of the economy and make predictions about behavior. That is what the Austrians do! The problem is that predictions rely on assumptions. For example, we say that a monopoly grant is welfare-reducing. However, when there are monopolies over common-access resources (fisheries for example), they are welfare-enhancing since the monopoly does not want to deplete the resource and compete against its future self. All we tweaked was one assumption about the type of good being monopolized. Moreover, I can get the same result as the conventional logic regarding monopolies by tweaking one more assumption regarding time discounting. Indeed, a monopoly over a common-access resource is welfare-enhancing as long as the monopolist values the future stream of income more than the future value of the present income. In other words, someone on the brink of starvation might not care much about not having fish tomorrow if he makes it to tomorrow.
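The discounting point can be made concrete with a small numerical sketch. Everything in it is an illustrative assumption of mine rather than something taken from the fisheries literature: a stock that regrows logistically, a sole owner choosing between fishing out the whole stock today and taking the maximum sustainable yield at the end of every year forever, and all function names and parameter values.

```python
def pv_deplete_now(stock: float, price: float = 1.0) -> float:
    """Present value of fishing out the whole stock today (nothing is left afterwards)."""
    return price * stock


def pv_sustainable(growth_rate: float, capacity: float,
                   discount_rate: float, price: float = 1.0) -> float:
    """Present value of taking the maximum sustainable yield every year, forever.

    Assumes logistic growth g(S) = r * S * (1 - S / K), whose maximum
    sustainable yield is r * K / 4 (reached at S = K / 2). The harvest is
    taken at the end of each year, so the stream is a simple perpetuity.
    """
    annual_yield = growth_rate * capacity / 4
    return price * annual_yield / discount_rate


def prefers_conservation(stock: float, growth_rate: float,
                         capacity: float, discount_rate: float) -> bool:
    """True if the sole owner earns more by conserving than by depleting."""
    return pv_sustainable(growth_rate, capacity, discount_rate) > pv_deplete_now(stock)


if __name__ == "__main__":
    # Hypothetical fishery: carrying capacity 1000, current stock 500, 20% growth rate.
    for rate in (0.05, 0.50):  # a patient owner vs. one "on the brink of starvation"
        choice = "conserve" if prefers_conservation(500, 0.2, 1000, rate) else "deplete"
        print(f"discount rate {rate:.2f}: {choice}")
```

In this setup, with the stock sitting at half the carrying capacity, the owner conserves whenever the discount rate is below half the growth rate. The same model yields opposite predictions about monopoly depending only on which discounting assumption applies.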

If I were to test the claims above, I could get a wide variety of results (here are some conflicting examples from Canadian economic history of fisheries) regarding the effects of monopoly. All of these apparent contradictions result from the nature of the assumptions and whether they apply to each case studied. In this case, the empirical part is totally in line with the Austrian view. Indeed, empirical work simply tells us which of these assumptions apply in case X, Y, or Z. Seen this way, all debates about methods (e.g. endogeneity bias, selection bias, measurement, level of data observation) are debates about how to properly represent theories. Nothing more, nothing less.

It is a most Austrian thing to start with a clear model and then test predictions to see if the model applies to a particular question. A good example is the Giffen good. The Giffen good can theoretically exist, but we have yet to find one that convinces a majority of economists. Ergo, the Giffen good is theoretically true but it is also an irrelevant imaginary pink unicorn. Empirically, the Giffen good has simply failed to materialize over hundreds of papers in top journals.

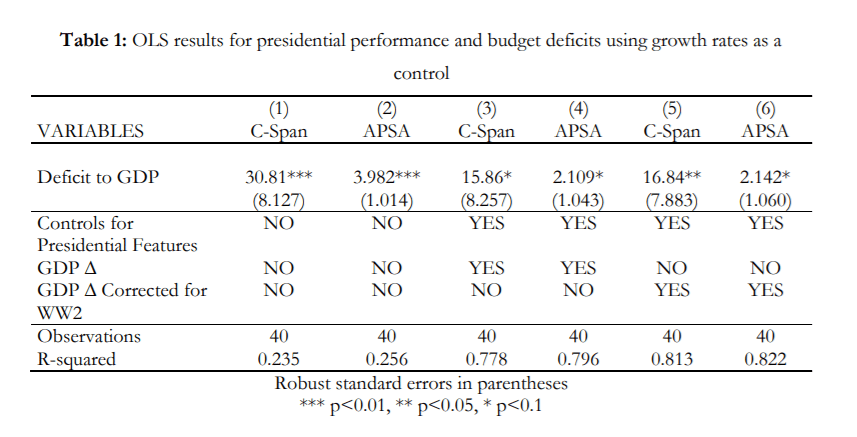

In fact, I see great value in doing empirical work through an Austrian lens. Indeed, I have written articles (one is a revise and resubmit at Public Choice, another is published in Review of Austrian Economics and another is forthcoming at Essays in Economic and Business History) using econometric methods such as difference-in-differences and a form of regression discontinuity to test the relevance of the theory of the dynamics of interventionism (which proposes that government intervention is a cumulative process of disequilibrium that planners cannot foresee). In each of these articles, I believe I demonstrated that the theory has some meaningful ability to predict the destabilizing nature of government interventions. When I started writing these articles, I believed that the body of theory I was using was true because it was logically consistent. However, I was willing to accept that it could be irrelevant or generally not applicable.

In other words, you can see why I fail to perceive any meaningful difference between Austrian theory and other schools of economic thought. For years, I realized I was one of the few to see it like this and I never understood why. A few months ago, I think I put my finger on the “why” after reading a forthcoming piece by my colleague Mark Koyama: Austrians assume econometrics to be synonymous with economic planning.

I admit that I have read Mises’ Theory and History and came out not understanding why Austrians think that Mises admonished against the use of econometrics. What I read was more a reaction to the use of econometrics for planning and policy-making. Econometrics can be used to answer questions of applicability without in any way rejecting any of the Austrian framework. Maybe I am an oddball, but I was a fellow Austrian traveler when I entered the LSE and remained one as I learned to use econometrics. I never saw any conflict between using quantitative methods and Austrian theory. I only saw a conflict when I spoke to extreme Rothbardians who seemed to conflate the use of tools to weigh theories and the use of econometrics to make public policy. The former is desirable while the latter is to be shunned. Maybe it is time for Austrians to realize that there is good reason to reject econometrics as a tool to “plan” the economy (which I do) and to accept econometrics as a tool of study and testing. After all, methods are tools and tools are not inherently bad or good; it’s how we use them that matters.

That’s it, that’s all I had to say.