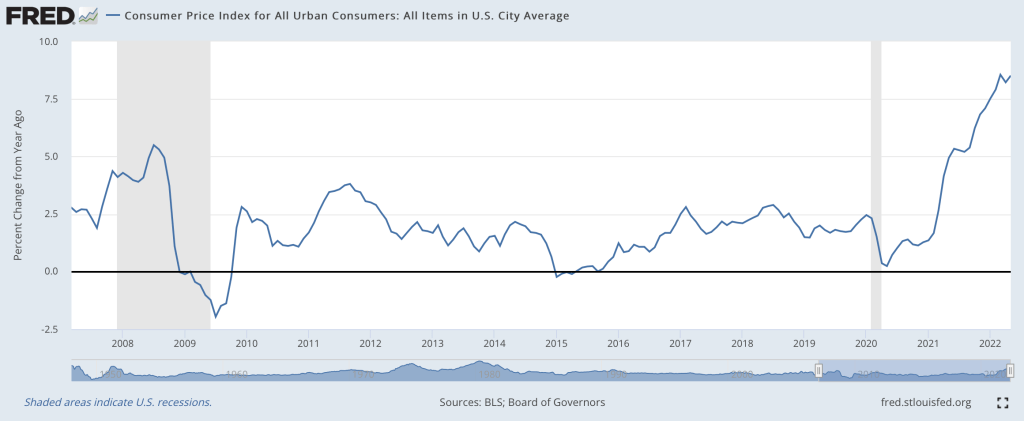

I think so, though the path back to 2% is a long one. Two months ago I wrote that “the Fed is still under-reacting to inflation”. We’ve had an eventful two months since; last Friday the BLS announced that CPI prices rose 1% in May alone, and that:

> The all items index increased 8.6 percent for the 12 months ending May, the largest 12-month increase since the period ending December 1981
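
To put the 1% monthly figure in context, here is a rough sketch of what it would compound to if it were sustained for a full year. The function name is my own, the input is the rounded 1% figure rather than the unrounded BLS number, and a single month is noisy, so treat this as illustrative arithmetic rather than a forecast.

```python
def annualized_rate(monthly_rate: float) -> float:
    """Compound a one-month rate of change over 12 months."""
    return (1 + monthly_rate) ** 12 - 1


# Rounded May figure quoted above (assumption: exactly 1.0%)
may_monthly = 0.01

print(f"Annualized: {annualized_rate(may_monthly):.1%}")  # Annualized: 12.7%
```

A month like May, repeated twelve times, would run well above the 8.6% trailing 12-month number, which is part of why the report rattled markets.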

Then this Wednesday the Fed announced they were raising interest rates by 0.75 percentage points, the biggest increase since 1994, despite having said after their last meeting that they weren’t considering increases above 0.5 points. I don’t like their communications strategy, but I do like their actions this month. This change in the Fed’s stance is one reason I think we’re at or near the peak.

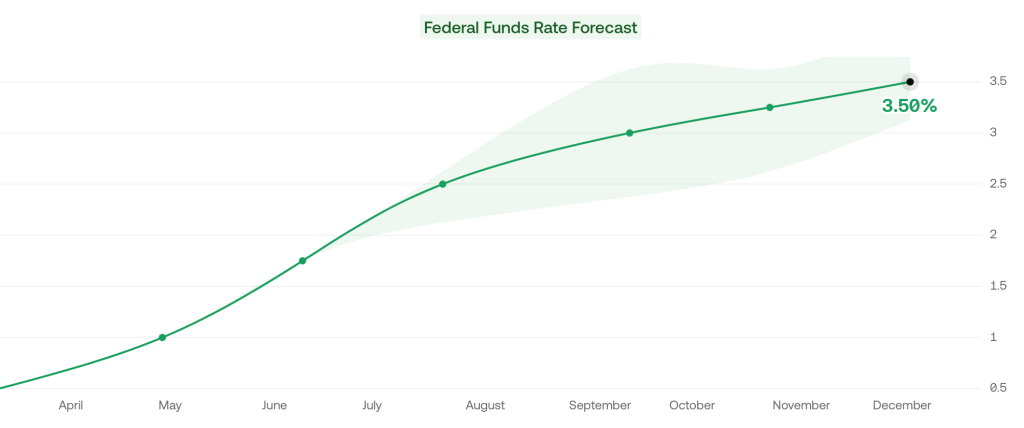

It’s not just what the Fed did this week, it’s the change in their plans going forward. As of April, the Fed said the Fed Funds rate would be 1.75% in December, and markets thought it would be 2.5%. But now the Fed and markets both project 3.5% rates in December.

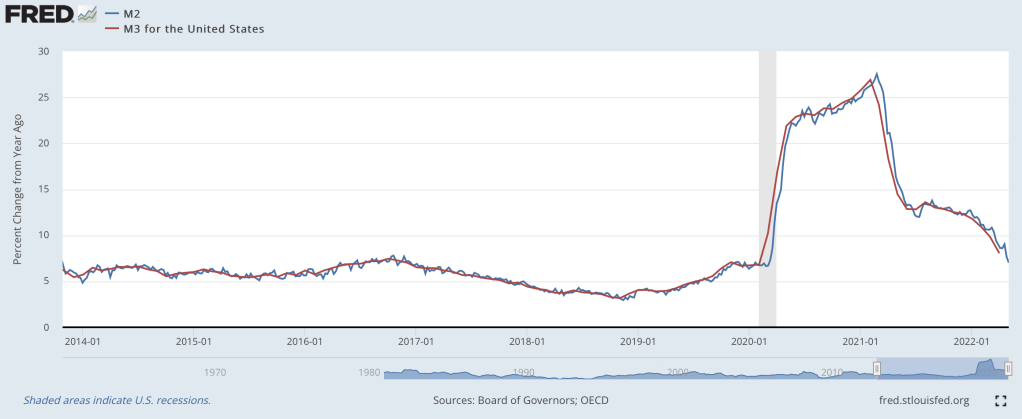

The other reason I’m optimistic is that the days of rapid money supply growth continue to get further behind us. From March to May 2020, the M2 and M3 supply exploded, growing at the fastest pace in at least 40 years:

Rapid inflation began about 12 months later. But the rate of money supply growth peaked in February 2021, then began a rapid decline. Based on the latest data from April 2022, money supply growth is down to 8%, a bit high but finally back to a normal range. Money supply changes famously influence prices with “long and variable lags”, so it’s hard to call the top precisely. But the fact that we’re now 15 months past the peak of money supply growth (and have stable monetary velocity) is encouraging. Old-fashioned money supply is the same indicator that led Lars Christensen to predict this high inflation in April 2021 after successfully predicting low inflation post-2009 (many people got one of those calls right, but very few got both).

Stocks also entered an official bear market this week (down 20% from highs), which is both a sign of excess money no longer pumping up markets, and a cause of lower demand going forward.

Markets seem to agree with my update: 5-year breakevens have fallen from a high of 3.6% back in March down to 2.9% today, implying 2.9% average inflation over the next 5 years. Much improved, though as I said at the top, the path to 2% will be a long one: think years, not months. Even the Fed expects inflation to be over 5% at the end of this year, and for it to fall only to 2.6% next year.
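
For a sense of scale, here is a small sketch of what those average rates mean for the price level after five years. The function name is hypothetical, and the calculation assumes the breakeven can be read as a constant annual inflation rate, which ignores risk and liquidity premia baked into market prices.

```python
def cumulative_price_rise(avg_inflation: float, years: int) -> float:
    """Total price-level increase from a constant annual inflation rate."""
    return (1 + avg_inflation) ** years - 1


# March high, today's breakeven, and the Fed's 2% target
for label, rate in [("March breakeven", 0.036),
                    ("Current breakeven", 0.029),
                    ("2% target", 0.02)]:
    total = cumulative_price_rise(rate, years=5)
    print(f"{label}: {rate:.1%} a year -> {total:.1%} over 5 years")
```

Under these assumptions, the drop from 3.6% to 2.9% takes the implied five-year rise in prices from roughly 19% to roughly 15%, still well above the roughly 10% that a steady 2% would produce.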

What am I still worried about? The Producer Price Index is still growing at 20%. The Fed is raising rates quickly now, but their balance sheet is still over twice its pre-Covid level and is shrinking very slowly. The Russia-Ukraine war drags on, keeping oil and gas prices high, and we have likely yet to see its full impact on food prices. Making good predictions is hard.

While I’m sticking my neck out, I’ll make one more prediction, though this one is easier: Dems are in for a bad time in November. A new president’s party generally does badly at his first midterm, as in 2018 and 2010. But this time the economy will be a huge drag on top of that. November is late enough that the real economy will be notably slowed by the Fed’s inflation-fighting efforts, but not so late that inflation will be under control (I expect it to be lower than today but still above 5%). Markets currently predict a 75% chance that Republicans take the House and Senate in November, and if anything that seems low to me.