This week the Census Bureau released its annual update on “Income, Poverty and Health Insurance Coverage in the United States.” This release is always exciting for researchers, because it comes with a massive amount of data based on a fairly large (75,000 household) sample with detailed questions about income and related matters. For non-specialists, it also generates some of the most commonly used national data on income and poverty. Have you heard of the poverty rate? It’s from this data. How about median household income? Also from this data.

I’ll focus on income data in this post, though there is a lot you could say about poverty and health insurance too. The headline result on median income is, once again, a dismal one. Whether you look at median household income (very commonly reported, even though I don’t like this measure) or median family income (which I prefer), both are down from 2021 to 2022 when adjusted for inflation. Both are still down noticeably from the pre-pandemic high in 2019 (though both are also above 2018 — we aren’t quite back to the Great Depression or Dark Ages, folks!).

These headline results are bad. There is no way to sugarcoat or “on the other hand” those results. And these results are probably more robust and representative than other measures of average or median earnings, since they aren’t subject to “composition effects” — when those with zero wages in one period don’t show up in the data. I will note that these results are for 2022, and we are highly likely to see a turnaround when we get the 2023 data in about a year (inflation has slowed to less than wage growth in 2023).

But given that obviously bad headline result, was there any good data? As I mentioned above, a ton of data, sliced many different ways, is released with this report. Some of it also gives us consistent data back decades, in some cases to the 1940s. What else can we learn from this data release?

Median Income by Race

When we look at median income by race, there are a few silver linings. The headline data from Census tells us that only the drop in household income for White, Non-Hispanics was statistically significant. For other races and ethnicities, the changes were not statistically significant from 2021 to 2022 — and some of those changes were actually positive. We shouldn’t dismiss White, Non-Hispanics — they are the largest racial/ethnic group! — but it is useful to look at others.
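For a rough feel of what "statistically significant" means here, a common back-of-the-envelope check compares two estimates using their published margins of error. Below is a minimal Python sketch. The function name and the dollar figures are made up for illustration. I am also assuming the margins of error are at the 90 percent confidence level, and I am treating the two years as independent samples. That last part is a simplification, so this will not exactly reproduce the official test.

```python
import math

# Critical value for a 90 percent confidence level (assumed here).
Z_90 = 1.645


def difference_is_significant(est_a, moe_a, est_b, moe_b):
    """Rough test of whether est_b differs from est_a.

    moe_a and moe_b are assumed to be 90 percent margins of error.
    Treats the two estimates as independent (a simplification).
    Returns (is_significant, z_score).
    """
    # Convert each margin of error back into a standard error.
    se_a = moe_a / Z_90
    se_b = moe_b / Z_90
    # Standard error of the difference between independent estimates.
    se_diff = math.sqrt(se_a ** 2 + se_b ** 2)
    z = (est_b - est_a) / se_diff
    return abs(z) > Z_90, z


# Hypothetical numbers, NOT actual Census figures.
small_change = difference_is_significant(50_000, 1_000, 50_500, 1_000)
large_change = difference_is_significant(50_000, 1_000, 53_000, 1_000)
print(small_change)
print(large_change)
```

The point of the sketch is that a change of a few hundred dollars can easily sit inside the sampling noise, which is why a small increase can be real in the sample and still not count as statistically significant.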

Black households and families are the most interesting to look at in more detail, especially because they are the poorest large racial group in the US. Black household and family income increased from 2021 to 2022, although the increase was small enough that we can’t say it is statistically significant (remember, this is a sample, not the universe of the decennial Census).

But what’s more important is that median Black household income is now at the highest level it has ever been (adjusted for inflation, as always). Median Black household income is about $1,000, or around 2 percent higher than in 2019 — the peak date for overall median income. Two percent growth over 3 years is nothing to shout from the rooftops, but it is very different from White, Non-Hispanic households, which are down over 6 percent since 2019.

Median Black family income is roughly flat since 2019, but it is up about 1.5 percent in the past year (not quite as robust, but still better than the overall numbers).
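For anyone who wants to reproduce percent changes like the ones quoted above from nominal figures, the arithmetic is just two steps: convert both years to the same year's dollars with a price index, then take the percent change. Here is a small sketch in Python. The function names, the index values, and the incomes are all hypothetical placeholders, not the actual Census numbers or the specific index Census uses.

```python
def to_real_dollars(nominal, index_then, index_base):
    """Restate a nominal amount in base-year dollars.

    index_then: price index level in the year the amount was measured.
    index_base: price index level in the year we want to express it in.
    """
    return nominal * index_base / index_then


def percent_change(old, new):
    """Percent change from old to new."""
    return (new - old) / old * 100


# Hypothetical inputs for illustration only.
income_2019_nominal = 45_000
income_2022_nominal = 53_000
index_2019 = 100.0  # placeholder index level
index_2022 = 115.0  # placeholder index level

# Express the 2019 income in 2022 dollars, then compare.
income_2019_real = to_real_dollars(income_2019_nominal, index_2019, index_2022)
change = percent_change(income_2019_real, income_2022_nominal)
print(f"Real change since 2019: {change:.1f} percent")
```

With made-up inputs like these, a big nominal gain shrinks to a small real gain, which is the same pattern as the modest real growth described above.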

Historical Income Data

The other silver lining I always like to mention is the long-run historical data. This data often gets overlooked in the obsessive focus on the most recent changes, so it’s useful to sit back and look at how far we have come. Let’s start where we just left off, with Black families. I wrote a post back in February about Black family income, which had data current through 2021, but it’s useful once again to look at the data with another year (plus they have updated the inflation adjustments for 2000 onward).

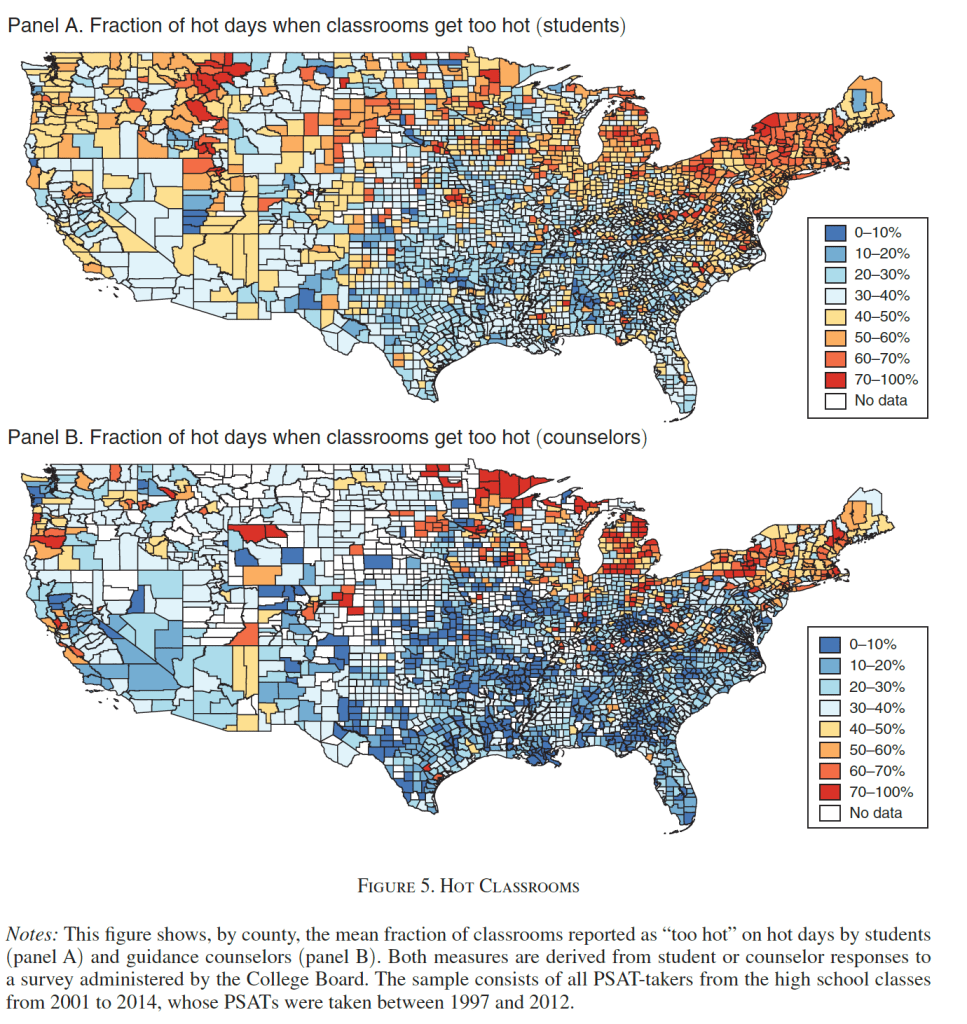

The chart shows the percent of Black families that are in three income groups, using total money income data. The data is adjusted for inflation. The progress is dramatic. In 1967, the first year available, half of Black families had incomes under $35,000. By 2022 that share had been cut in half to just one quarter of families (the 2022 number is the lowest on record, even beating 2019). Twenty-five percent is still very high, especially when compared to White, Non-Hispanics (it’s about 12 percent), but it’s still massive progress. It’s even a 10-percentage-point drop from just 10 years ago. And Black families haven’t just moved up a little bit: the “middle class” group (between $35,000 and $100,000) has been pretty stable in the mid-40 percentages, while the share of rich (over $100,000) Black families has grown dramatically, from just 5 percent to over 30 percent.
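If you have family-level income data and want to build the same kind of three-group breakdown, the calculation is simple. The sketch below is one way to do it in Python. The function name and the sample incomes are invented for illustration, it ignores survey weights (which the real data would need), and how I treat incomes exactly at the cutoffs is my own assumption.

```python
def income_group_shares(incomes, low_cutoff=35_000, high_cutoff=100_000):
    """Return the percent of families in each of three income groups.

    Assumptions: incomes are already inflation adjusted, every family
    counts equally (no survey weights), and incomes exactly at a cutoff
    fall in the middle group.
    """
    if not incomes:
        raise ValueError("incomes must not be empty")

    counts = {"under": 0, "middle": 0, "over": 0}
    for income in incomes:
        if income < low_cutoff:
            counts["under"] += 1
        elif income <= high_cutoff:
            counts["middle"] += 1
        else:
            counts["over"] += 1

    total = len(incomes)
    return {group: 100 * n / total for group, n in counts.items()}


# Made-up incomes for four families, for illustration only.
sample = [20_000, 30_000, 50_000, 120_000]
print(income_group_shares(sample))
```

Running the same function on each year of data would give the three lines in a chart like the one above.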

We saw earlier that progress for White, Non-Hispanics has stumbled in the past 3 years, but the long run data is much more optimistic (this data starts in 1972).

The progress here should be evident too, but let me highlight one thing for emphasis: as far back as 1999, the largest of these three groups was the “rich” (over $100,000) group. And since 2017, the upper income group has been the majority, with median White Non-Hispanic family income surpassing $100,000 in 2017, up from $70,000 at the beginning of the series in the early 1970s (all inflation adjusted, of course).

The next question I often get with this historical data is: How much of this increase is due to the rise of two-income households? Well, this same data release allows us to look at that too! This final chart shows median family income for families with either one or two earners (there are families with zero earners or more than two, but these two categories make up the bulk of families). This data is pretty cool because it goes all the way back to 1947.

This chart doesn’t look so good for one-earner families. After growing along with that of two-earner families in the 1950s and 1960s, their median income basically stagnates from the early 1970s until the late 2010s. Then you get a little growth. Not good!

I think more investigation is needed here, but the share of families that have two earners has grown dramatically, from 26 percent of families in 1947 to 42 percent in 2022. Single-earner families shrank from 59 percent to 31 percent, and dual-income families have been the most common family type since the late 1960s. There are some important compositional differences here in what types of families only have one earner. If we imagine some alternate history where, by law, only one spouse was allowed to work, certainly the single-earner line would have risen more. And many of the single-earner families today are single mothers, who for a variety of reasons have much lower earning potential than the fathers heading married couples in the 1950s and 1960s. So the numbers aren’t perfectly comparable.

Still, even for single-earner families, real median income has more than doubled since 1947, though most of that growth had happened by the early 1970s.

As we make our way through a challenging economic time following the pandemic and 2 years of unusually high inflation, hopefully we can look forward to a future of resuming the upward trajectory of incomes for all kinds of families.